c6eacc8

Adam's Bridge Hardware Specification

Version 1.0.2

Scope

This document defines technical specifications for a Adam's Bridge Post-Quantum Cryptography (PQC ML-DSA) subsystem used in the Open Compute Project (OCP). This document shall comprise the Adam's Bridge technical specification.

Overview

This document provides definitions and requirements for a Adam's Bridge Post-Quantum Cryptography (PQC ML-DSA) subsystem. The document then relates these definitions to existing technologies, enabling device and platform vendors to better understand those technologies in trusted computing terms.

Introduction

The advent of quantum computers poses a serious challenge to the security of cloud infrastructures and services, as they can potentially break the existing public-key cryptosystems, such as RSA and elliptic curve cryptography (ECC). Even though the gap between today’s quantum computers and the threats they pose to current public-key cryptography is large, the cloud landscape should act proactively and initiate the transition to the post-quantum era as early as possible. To comply with that, the U.S. government issued a National Security Memorandum in May 2022 that mandated federal agencies to migrate to PQC by 2035 [1].

The long-term security of cloud computing against quantum attacks depends on developing lattice-based cryptosystems, which are among the most promising PQC algorithms that are believed to be hard for both classical and quantum computers. The American National Institute of Standards and Technology (NIST) recognized this and selected CRYSTALS-KYBER (ML-KEM) and CRYSTALS-Dilithium (ML-DSA) [2], two lattice-based algorithms, as standards for post-quantum key-establishment and digital signatures, respectively, in July 2022. These cryptosystems are constructed on the hardness of the module learning-with-errors problem (M-LWE) in module lattices.

To transition to PQC, we must develop hybrid cryptosystems to maintain industry or government regulations, while PQC updates will be applied thoroughly. Therefore, classical cryptosystems, e.g. ECC, cannot be eliminated even if PQC will significantly be developed.

Adam’s bridge was a mythological structure that existed to cross the formidable gulf that existed between two land masses. Asymmetric cryptography to post quantum is a similar formidable gap that exists in the world of cryptography and Adam’s bridge is the work undertaken to bridge the gap by building post quantum cryptographic accelerators.

In this presentation, we share the architectural characteristics of our post-quantum Adams Bridge implementation. Our proposed work divides the operations in the algorithms into multiple stages and executes them using pipelined processing architecture. We use an optimized cascading method within each stage and fine-tune each module individually to exploit multi-levels of parallelism to accelerate post-quantum Dilithium computation on hardware platforms to address performance and complexity challenges of PQC implementation. Our proposed architecture uses various optimization techniques, including multi-levels of parallelism, designing reconfigurable cores, and implementing interleaved and pipelined architecture achieving significant speedup while maintaining high security and scalability. Our work can facilitate the adoption and deployment of PQC in cloud computing and enhance the security and efficiency of cloud services and applications in the post-quantum era.

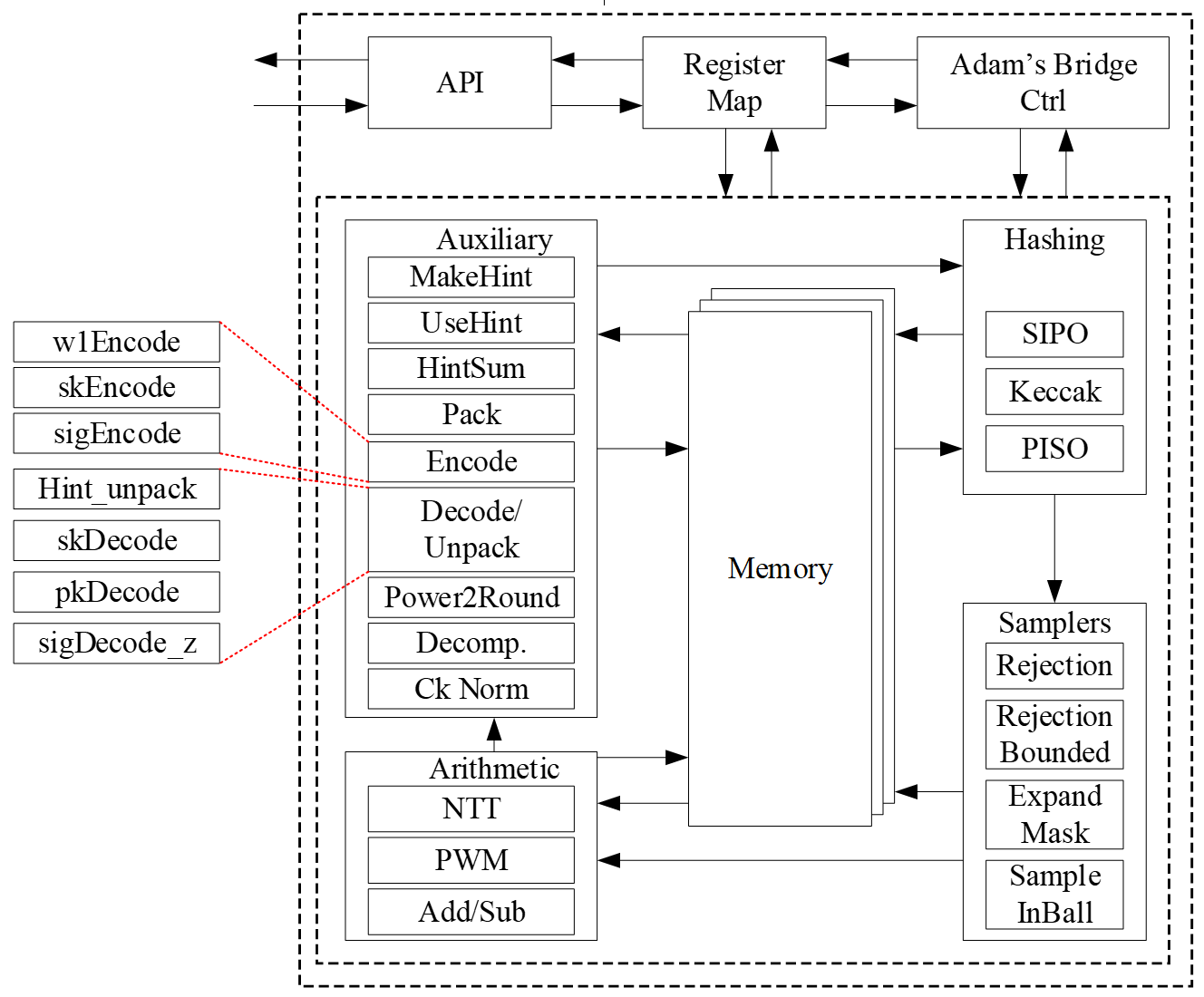

High-Level Overview

Adam’s Bridge accelerator has all the necessary components to execute a pure hardware PQC operation. The main operations that involve more computational complexity, such as NTT, hashing, and sampling units, are explained as follows.

The security level of ML-DSA defined by NIST are as follows:

| Algorithm Name | Security Level |

|---|---|

| ML-DSA-44 | Level-2 |

| ML-DSA-65 | Level-3 |

| ML-DSA-87 | Level-5 |

CNSA 2.0 only allows the highest security level (Level-5) for PQC which is ML-DSA-87, and Adams Bridge only supports ML-DSA-87 parameter set.

API

The ML-DSA-87 architecture inputs and outputs are described in the following table.

| Name | Input/Output | Operation | Size (Byte) |

|---|---|---|---|

| name | Output | All | 8 |

| version | Output | All | 8 |

| ctrl | Input | All | 4 |

| status | Output | All | 4 |

| entropy (SCA) | Input | All | 64 |

| seed | Input | Keygen | 32 |

| sign_rnd | Input | Sign | 32 |

| message | Input | Sign/Verify | 64 |

| verification result | Output | Verify | 64 |

| External_Mu | Input | Sign/Verify | 64 |

| message strobe | Input | Sign/Verify | 1 |

| ctx size | Input | Sign/Verify | 1 |

| ctx | Input | Sign/Verify | 255 (+1) |

| pk | Input/Output | Keygen/Verify | 2592 |

| signature | Input/Output | Sign/Verify | 4627 (+1) |

| sk_out (software only) | Output | Keygen | 4896 |

| sk_in | Input | Signing | 4896 |

| Interrupt | Output | All | 520 |

| --------------------------- | --------------- | --------------- | ------------- |

| Total | 18440 |

name

Read-only register consists of the name of component.

version

Read-only register consists of the version of component.

CTRL

The control register consists of the following flags:

| Bits | Identifier | Access | Reset | Decoded | Name |

|---|---|---|---|---|---|

| [31:7] | - | - | - | - | |

| [6] | STREAM_MSG | w | 0x0 | - | |

| [5] | EXTERNAL_MU | w | 0x0 | - | |

| [4] | PCR_SIGN | w | 0x0 | - | |

| [3] | ZEROIZE | w | 0x0 | - | |

| [2:0] | CTRL | w | 0x0 | - |

CTRL

CTRL command field contains two bits indicating:

- Ctrl = 0b000

No Operation.

- Ctrl = 0b001

Trigs the core to start the initialization and perform keygen operation.

- Ctrl = 0b010

Trigs the core to start the signing operation for a message block.

- Ctrl = 0b011

Trigs the core to start verifying a signature for a message block.

- Ctrl = 0b100

Trigs the core to start the keygen+signing operation for a message block. This mode decreases storage costs for the secret key (SK) by recalling keygen and using an on-the-fly SK during the signing process.

ZEROIZE

Zeroize all internal registers: Zeroize all internal registers after process to avoid SCA leakage.

Firmware write generates only a single-cycle pulse on the hardware interface and then will be erased.

Zeroization operation requires 1,408 clock cycles to clear the SRAMs. Firmware must query for the ready status bit to be asserted before issuing another command.

PCR_SIGN

Run PCR Signing flow: Run MLDSA KeyGen+Signing flow to sign PCRs.

EXTERNAL_MU

Enable External_Mu Mode. (this mode is hard turned off for now.) The External_mu variant of ML-DSA modifies the standard signing and verifying process by allowing the precomputed mu to be externally provided instead of being internally derived from the message and public key. In this variant, the signing procedure accepts mu as an explicit input, making it suitable for environments where mu is generated offline for efficiency. While the core signing and verifying algorithm remains unchanged, the message input register is ignored in this mode.

STREAM_MSG

Enable streaming message mode.

In this mode, the controller will wait until it requires the message data and will assert the MSG_STREAM_READY bit in the status register. Once MSG_STREAM_READY is observed, the user should first set MSG_STROBE to 0xF.

The user can then write the message, one dword at a time, by writing to dword 0 of the message register. If the last dword is partial, the user must set the MSG_STROBE register to appropriately indicate the valid bytes. If the message is dword-aligned, a value of 0x0 must be written to the MSG_STROBE register to indicate the last dword, followed by a dummy write to the message register.

The flow must be terminated by writing to the message register after setting the MSG_STROBE to a non 0xF value. No partial dwords are allowed before the last dword indication. MSG_STROBE only needs to be programmed before the stream of full dwords, and before the final dword. Valid values of MSG_STROBE include 4'b1111, 4'b0111, 4'b0011, 4'b0001, and 4'b0000.

status

The read-only status register consists of the following flags:

| Bits | Identifier | Access | Reset | Decoded | Name |

|---|---|---|---|---|---|

| [31:3] | - | - | - | - | |

| [2] | MSG_STREAM_READY | r | 0x0 | - | |

| [1] | VALID | r | 0x0 | - | |

| [0] | READY | r | 0x0 | - |

READY

Indicates if the core is ready to process the inputs.

VALID

Indicates if the process is computed and the output is valid.

MSG_STREAM_READY

Indicates if the core is ready to process the message.

entropy

Entropy is required for SCA countermeasures to randomize the inputs with no change in the outputs. The entropy can be any 512-bit value in [0 : 2^512-1].

The ML-DSA-87 countermeasure requires several random vectors to randomize the intermediate values. An internal mechanism is considered to take one random vector of 512-bit (i.e., entropy register) and generate the required random vectors for different countermeasures.

seed

Adams Bridge component seed register type definition 8 32-bit registers storing the 256-bit seed for keygen. The seed can be any 256-bit value in [0 : 2^256-1].

sign_rnd

This register is used to support both deterministic and hedge variants of ML-DSA. The content of this register is the only difference between the deterministic and hedged variant of ML-DSA.

- In the “hedged” variant, sign_rnd is the output of an RBG.

- In the “deterministic” variant, sign_rnd is a 256-bit string consisting entirely of zeroes.

message

When not in streaming message mode, this architecture supports PureML-DSA defined by NIST with an empty ctx. When streaming message mode is enabled, this field is ignored except for dword 0 which is used to stream in the message.

verification result

To mitigate a possible fault attack on Boolean flag verification result, a 64-byte register is considered. Firmware is responsible for comparing the computed result with a certain segment of signature (segment c~), and if they are equal the signature is valid.

msg strobe

A 4-bit indication of enabled bytes in the next dword of the streamed message. Users must first program this to 0xF after observing MSG_STREAM_READY, unless the message is less than 1 dword. If the final dword is partial, MSG_STROBE must be programmed appropriately before writing the final bytes. Dword aligned messages must program MSG_STROBE to 0x0 to indicate the message is done being streamed.

ctx size

A 8-bit indication of the size in bytes of the ctx to be used.

ctx

This register stores the ctx field. It is applied only during streaming message mode.

sk_out

This register stores the private key for keygen if seed is given by software. This register can be read by ML-DSA user, i.e., software, after keygen operation.

If seed comes from the key vault, this register will not contain the private key to avoid exposing secret assets to software.

sk_in

This register stores the private key for signing. This register should be set before signing operation.

pk

ML-DSA component public key register type definition storing the public key. This register can be read by Adams Bridge user after keygen operation, or be set before verifying operation.

signature

ML-DSA component signature register type definition storing the signature of the message. This register is read by Adams Bridge user after signing operation, or be set before verifying operation.

Pseudocode

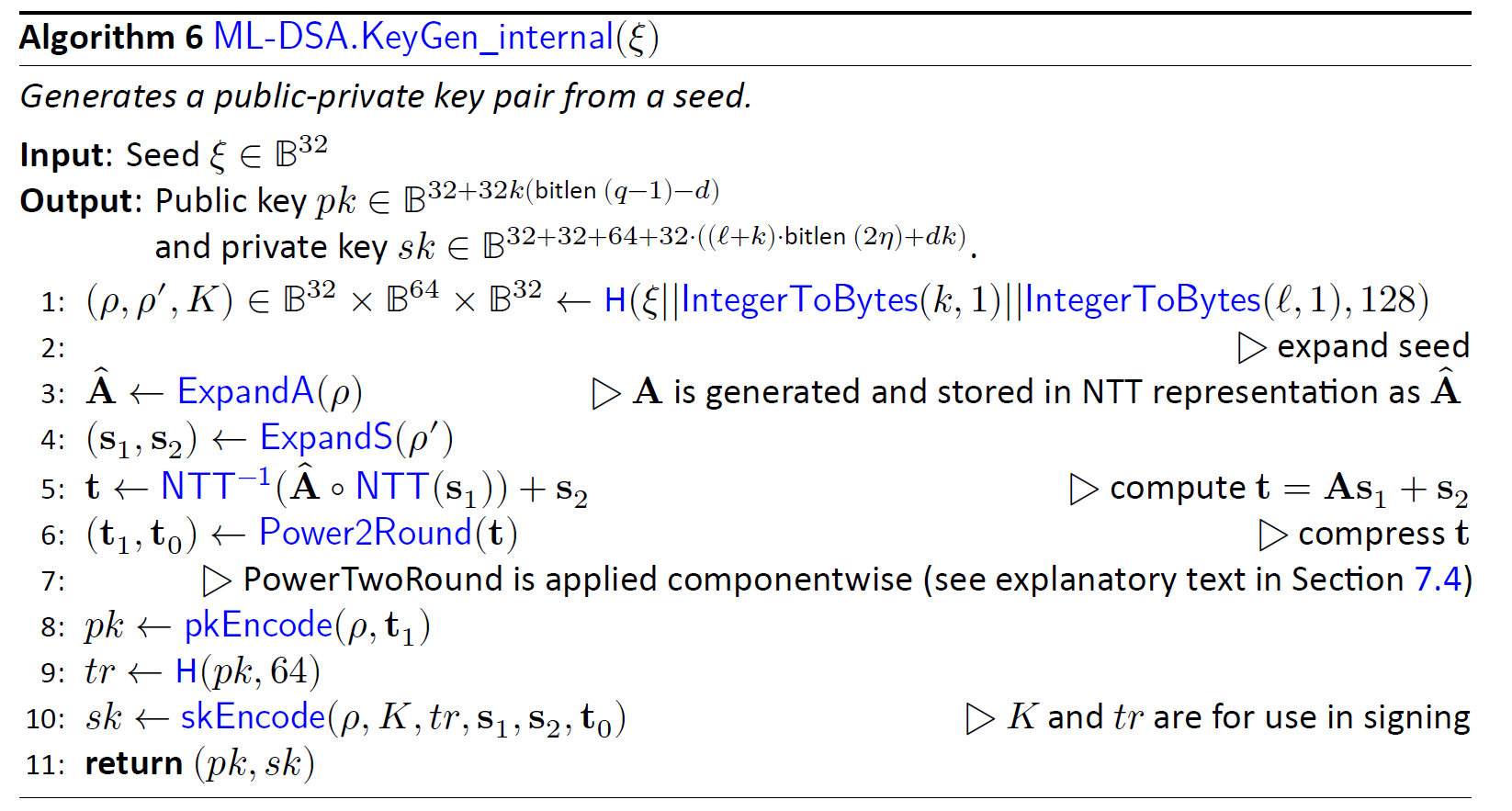

Keygen

Input:

seed

entropy

Output:

sk_out

pk

// Wait for the core to be ready (STATUS flag should be 2'b01 or 2'b11)

read_data = 0

while read_data == 0:

read_data = read(ADDR_STATUS)

// Feed the required inputs

write(ADDR_SEED, seed)

write(ADDR_ENTROPY, entropy)

// Trigger the core for performing Keygen

write(ADDR_CTRL, KEYGEN_CMD) // (STATUS flag will be changed to 2'b00)

// Wait for the core to be ready and valid (STATUS flag should be 2'b11)

read_data = 0

while read_data == 0:

read_data = read(ADDR_STATUS)

// Reading the outputs

sk_out = read(ADDR_SK)

pk = read(ADDR_PK)

// Return the outputs

return sk_out, pk

Signing

Input:

msg

sk_in

sign_rnd

entropy

Output:

signature

// Wait for the core to be ready (STATUS flag should be 2'b01 or 2'b11)

read_data = 0;

while (read_data == 0) {

read_data = read(ADDR_STATUS);

}

// Feed the required inputs

write(ADDR_MSG, msg);

write(ADDR_SK_IN, sk_in);

write(ADDR_SIGN_RND, sign_rnd);

write(ADDR_ENTROPY, entropy);

// Trigger the core for performing Signing

write(ADDR_CTRL, SIGN_CMD); // (STATUS flag will be changed to 2'b00)

// Wait for the core to be ready and valid (STATUS flag should be 2'b11)

read_data = 0;

while (read_data == 0) {

read_data = read(ADDR_STATUS);

}

// Reading the outputs

signature = read(ADDR_SIGNATURE);

// Return the output (signature)

return signature;

Verifying

Input:

msg

pk

signature

Output:

verification_result

// Wait for the core to be ready (STATUS flag should be 2'b01 or 2'b11)

read_data = 0;

while (read_data == 0) {

read_data = read(ADDR_STATUS);

}

// Feed the required inputs

write(ADDR_MSG, msg);

write(ADDR_PK, pk);

write(ADDR_SIGNATURE, signature);

// Trigger the core for performing Verifying

write(ADDR_CTRL, VERIFY_CMD); // (STATUS flag will be changed to 2'b00)

// Wait for the core to be ready and valid (STATUS flag should be 2'b11)

read_data = 0;

while (read_data == 0) {

read_data = read(ADDR_STATUS);

}

// Reading the outputs

verification_result = read(ADDR_VERIFICATION_RESULT);

// Return the output (verification_result)

return verification_result;

Keygen + Signing

This mode decreases storage costs for the secret key (SK) by recalling keygen and using an on-the-fly SK during the signing process.

Input:

seed

msg

sign_rnd

entropy

Output:

signature

// Wait for the core to be ready (STATUS flag should be 2'b01 or 2'b11)

read_data = 0;

while (read_data == 0) {

read_data = read(ADDR_STATUS);

}

// Feed the required inputs

write(ADDR_SEED, seed);

write(ADDR_MSG, msg);

write(ADDR_SIGN_RND, sign_rnd);

write(ADDR_ENTROPY, entropy);

// Trigger the core for performing Keygen + Signing

write(ADDR_CTRL, KEYGEN_SIGN_CMD); // (STATUS flag will be changed to 2'b00)

// Wait for the core to be ready and valid (STATUS flag should be 2'b11)

read_data = 0;

while (read_data == 0) {

read_data = read(ADDR_STATUS);

}

// Reading the outputs

signature = read(ADDR_SIGNATURE);

// Return the output (signature)

return signature;

Performance and Area Results

ML-DSA-87

The performance results for two operational frequencies, 400 MHz and 600 MHz, are presented in terms of latency (clock cycles [CCs]), time [ms], and performance [IOPS] as follows:

| Freq [MHz] | 400 | 600 | ||||

|---|---|---|---|---|---|---|

| "Unprotected" | Latency [CC] | Time [ms] | Performance [IOPS] | Time [ms] | Performance [IOPS] | |

| Keygen | 15,600 | 0.039 | 25,641 | 0.026 | 38,462 | |

| Signing (1 round) | 26,600 | 0.067 | 15,038 | 0.044 | 22,556 | |

| Signing (Ave) | 106,400 | 0.266 | 3,759 | 0.177 | 5,639 | |

| Verifying | 18,800 | 0.047 | 21,277 | 0.031 | 31,915 |

NOTE: Masking and shuffling countermeasures are integrated into the architecture and there is a work-in-progress to make it configureble to be enabled or disabled at synthesis time.

The area overhead associated with enabling these countermeasures is as follows:

| Freq [MHz] | 400 | 600 | ||||

|---|---|---|---|---|---|---|

| "Protected" | Latency [CC] | Time [ms] | Performance [IOPS] | Time [ms] | Performance [IOPS] | |

| keygen | 15,600 | 0.039 | 25,641 | 0.026 | 38,462 | |

| Signing (1 round) | 36,700 | 0.092 | 10,899 | 0.061 | 16,349 | |

| Signing (Ave) | 146,800 | 0.367 | 2,725 | 0.245 | 4,087 | |

| Verifying | 18,800 | 0.047 | 21,277 | 0.031 | 31,915 |

-

CNSA 2.0 only allows the highest security level (Level-5) for PQC which is ML-DSA-87, and Adams Bridge only supports ML-DSA-87 parameter set.

-

The requried area for the unprotected ML-DSA-87 is 0.0366mm2 @5nm:

- 0.0146mm2 for stdcell

- 0.0220mm2 for ram area for 57.38 KB memory.

-

The requried area for the protected ML-DSA-87 is 0.114mm2 @5nm:

- 0.0921mm2 for stdcell

- 0.0220mm2 for ram area for 57.38 KB memory.

-

The design is converging today at 600MHz at low, med & high voltage corners. (We have noticed the design converging to 1 GHz on a latest process node.)

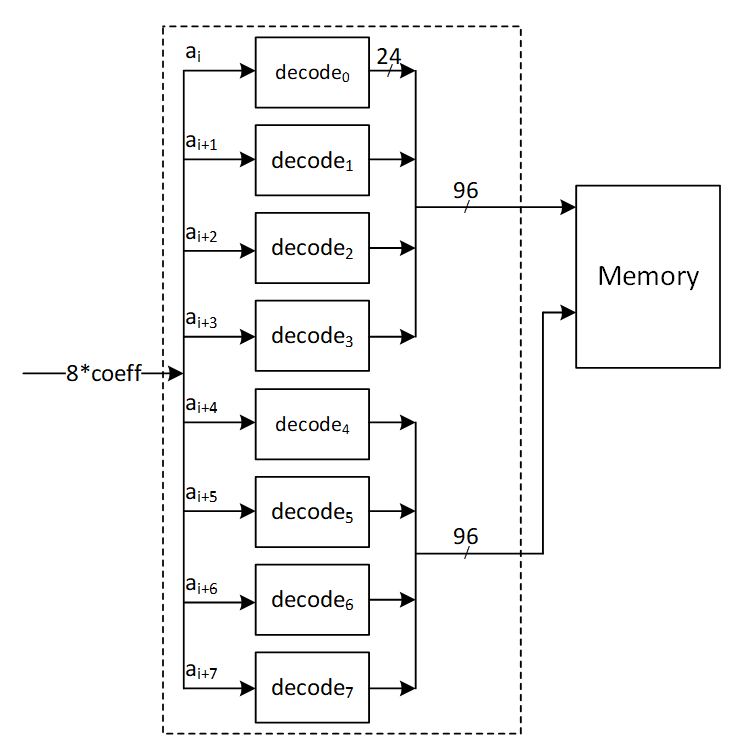

Memory requirement

The following table shows the required memory instances for ML-DSA-87:

| Instance | Depth | Data Width | Strobe Width |

|---|---|---|---|

| mldsa_top.mldsa_ctrl_inst.mldsa_sk_ram_bank0 | 596 | 32 | |

| mldsa_top.mldsa_ctrl_inst.mldsa_sk_ram_bank1 | 596 | 32 | |

| mldsa_top.mldsa_w1_mem_inst | 512 | 4 | |

| mldsa_top.mldsa_ram_inst0_bank0 | 832 | 96 | |

| mldsa_top.mldsa_ram_inst0_bank1 | 832 | 96 | |

| mldsa_top.mldsa_ram_inst1 | 576 | 96 | |

| mldsa_top.mldsa_ram_inst2 | 1408 | 96 | |

| mldsa_top.mldsa_ram_inst3 | 128 | 96 | |

| mldsa_top.mldsa_ctrl_inst.mldsa_sig_z_ram | 224 | 160 | 8 |

| mldsa_top.mldsa_ctrl_inst.mldsa_pubkey_ram | 64 | 320 | 8 |

All memories are modeled as 1 read 1 write port RAMs with a flopped read data. See abr_1r1w_ram.sv and abr_1r1w_be_ram.sv for examples.

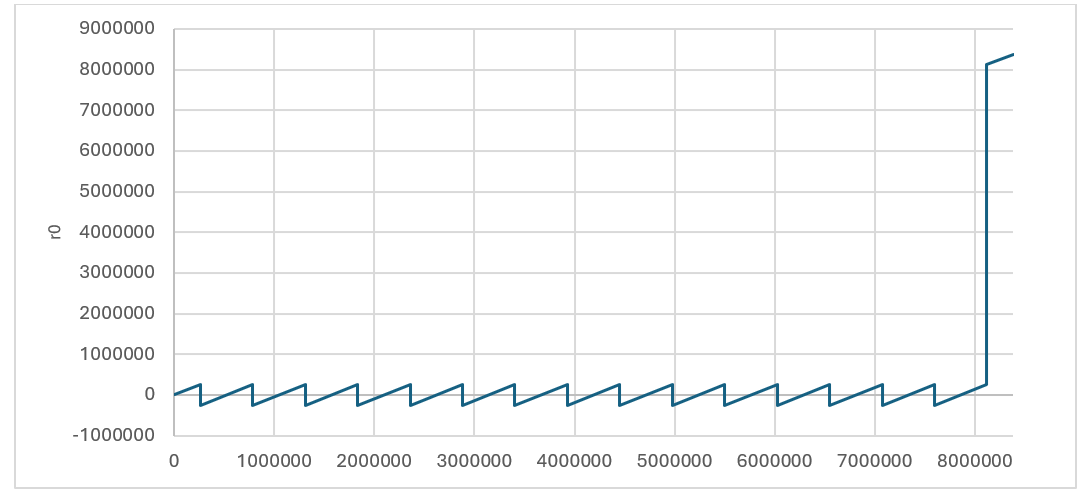

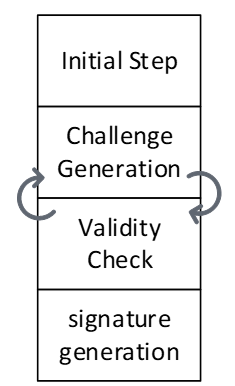

Signing perofrmance

The signing operation is the most time-consuming part of the MLDSA algorithm. However, it is not constant-time due to the inherent nature of ML-DSA. The signing process involves a loop that continues until all validity checks are passed. The number of iterations depends on the provided privkey, message, and sign_rnd.

According to FIPS 204 recommendations, there is no mechanism to interrupt the signing loop. Nevertheless, for the ML-DSA-87 parameter set, the average number of required loops is 3.85.

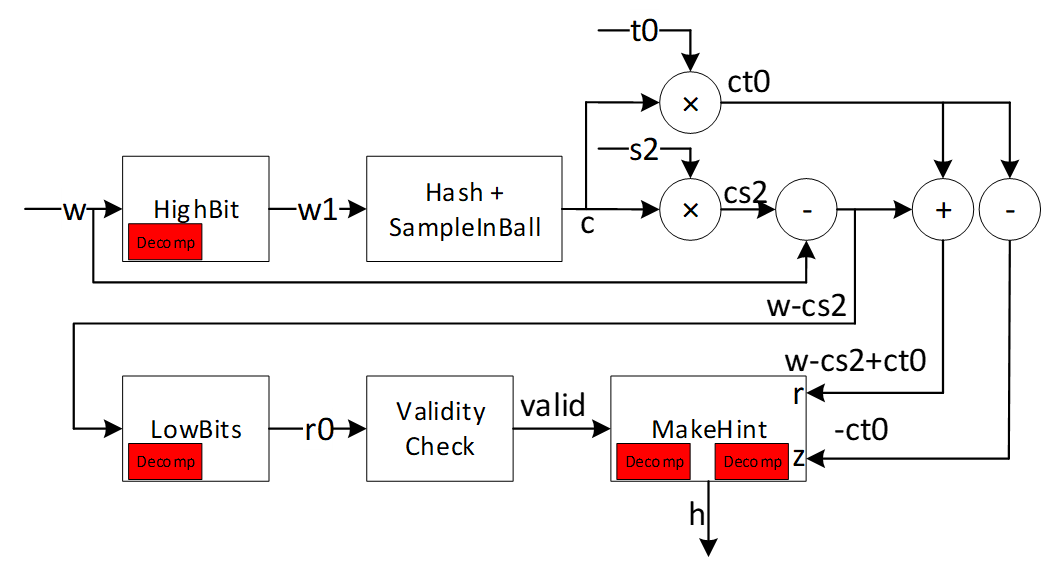

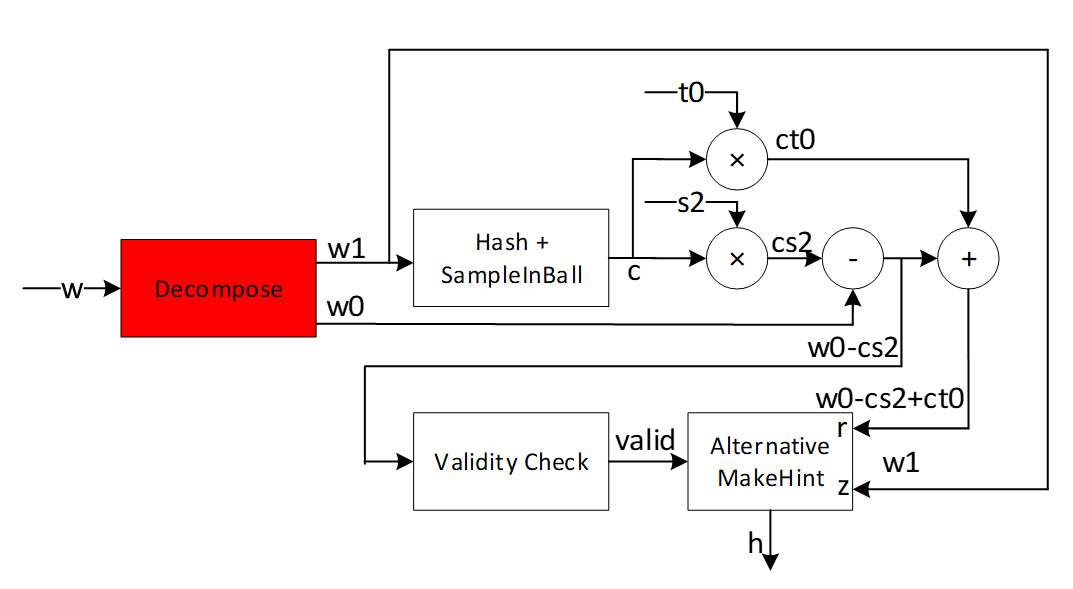

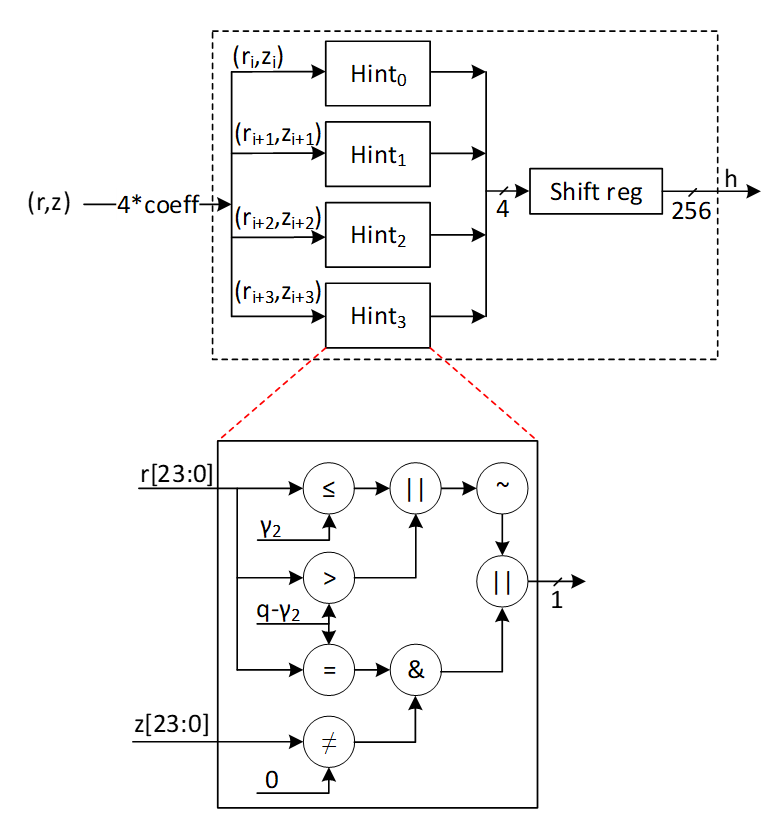

Proposed architecture

The value of k and l is determined based on the security level of the system defined by NIST as follows:

| Algorithm Name | Security Level | k | l |

|---|---|---|---|

| ML-DSA-87 | Level-5 | 8 | 7 |

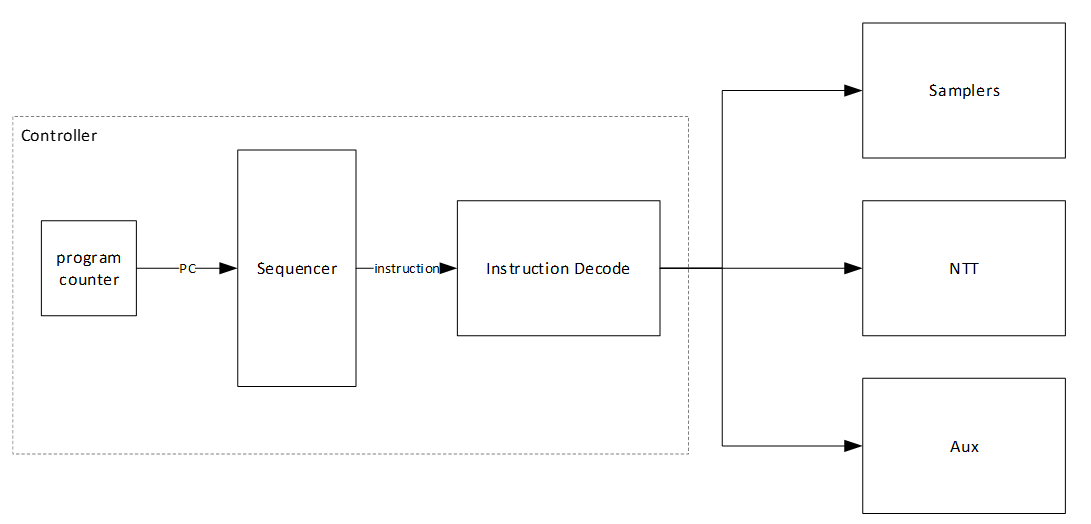

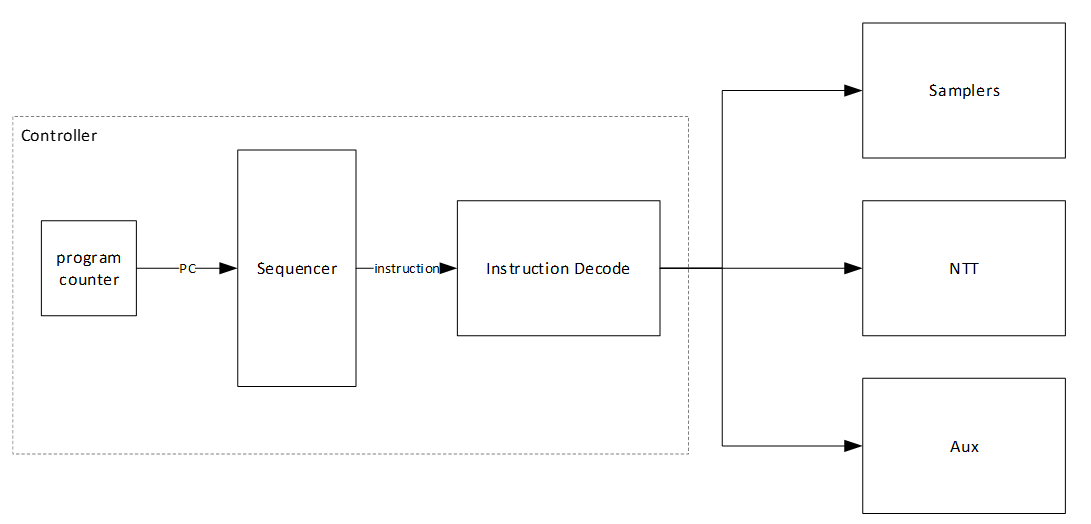

In the hardware design, using an instruction-set processor yields a smaller, simpler, and more controllable design. By fine-tuning hardware acceleration, we achieve efficiency without excessive logic overhead. We implement all computation blocks in hardware while maintaining flexibility for future extensions. This adaptability proves crucial in a rapidly evolving field like post-quantum cryptography (PQC), even amidst existing HW architectures.

The Customized Instruction-Set Cryptography Engine is designed to provide efficient cryptographic operations while allowing flexibility for changes in NIST ML-DSA standards and varying security levels. This proposal outlines the architecture, instruction set design, sequencer functionality, and hardware considerations for the proposed architecture. This architecture is typically implemented as an Intellectual Property (IP) core within an FPGA or ASIC, featuring a pipelined design for streamlined execution and interfaces for seamless communication with the host processor.

In our proposed architecture, we define specific instructions for various submodules, including SHAKE256, SHAKE128, NTT, INTT, etc. Each instruction is associated with an opcode and operands. By customizing these instructions, we can tailor the engine's behavior to different security levels.

To execute the required instructions, a high-level controller acts as a sequencer, orchestrating a precise sequence of operations. Within the architecture, several memory blocks are accessible to submodules. However, it's the sequencer's responsibility to provide the necessary memory addresses for each operation. Additionally, the sequencer handles instruction fetching, decoding, operand retrieval, and overall data flow management.

The high-level architecture of Adams Bridge controller is illustrated as follows:

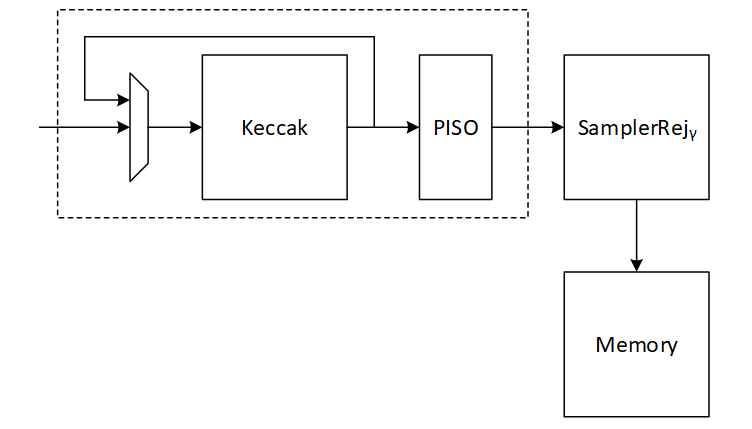

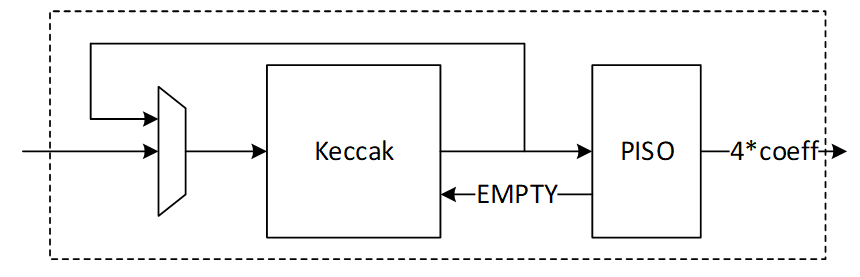

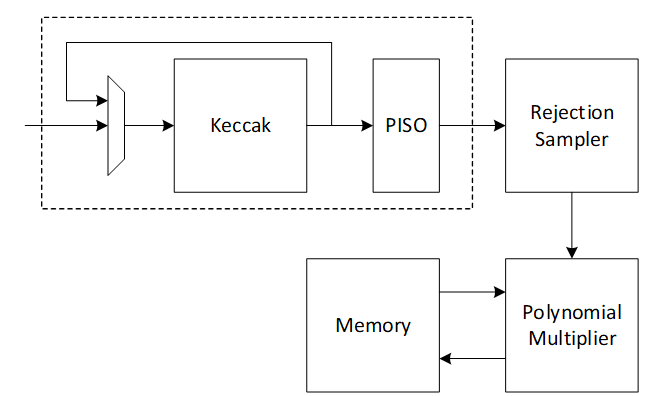

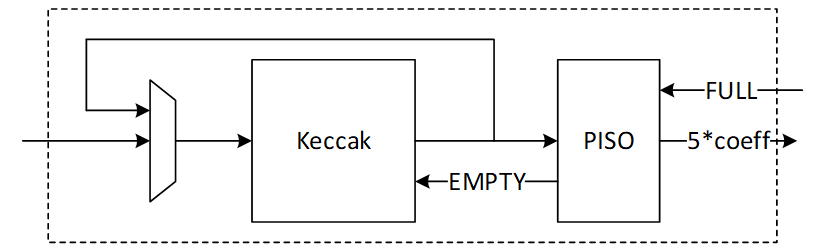

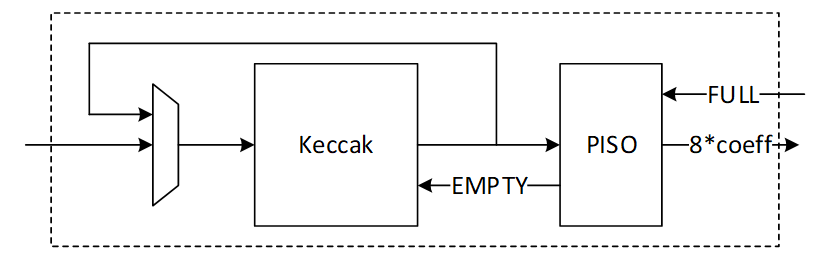

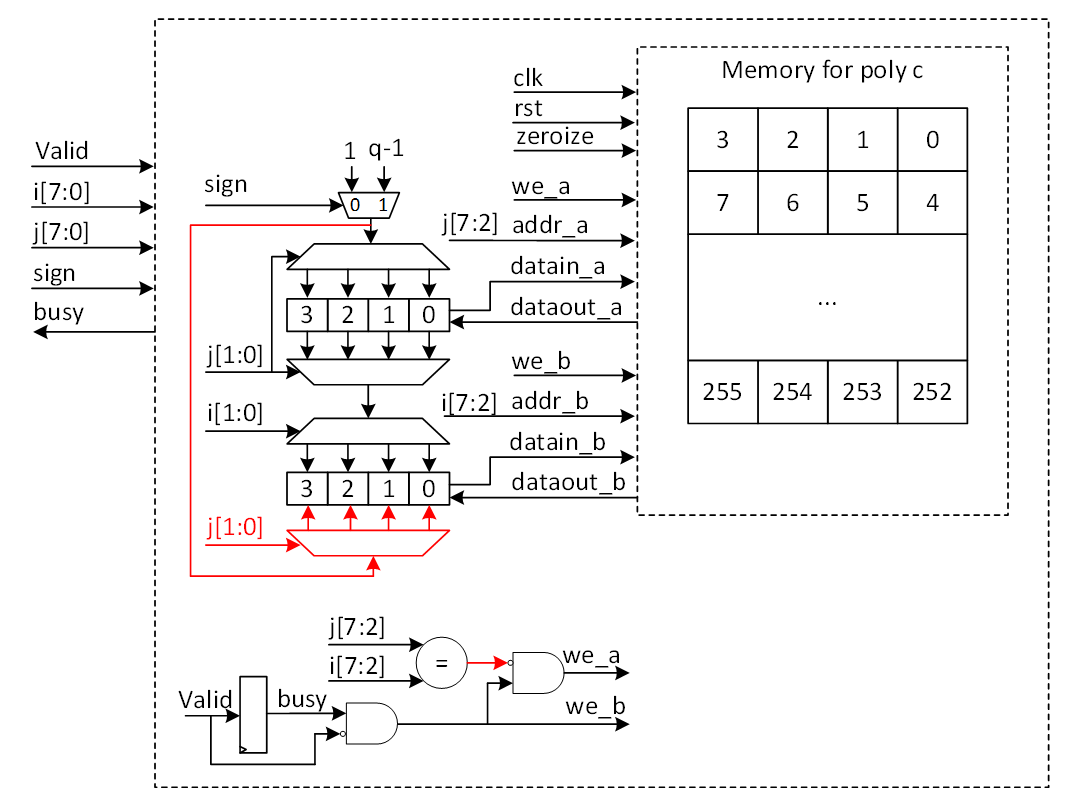

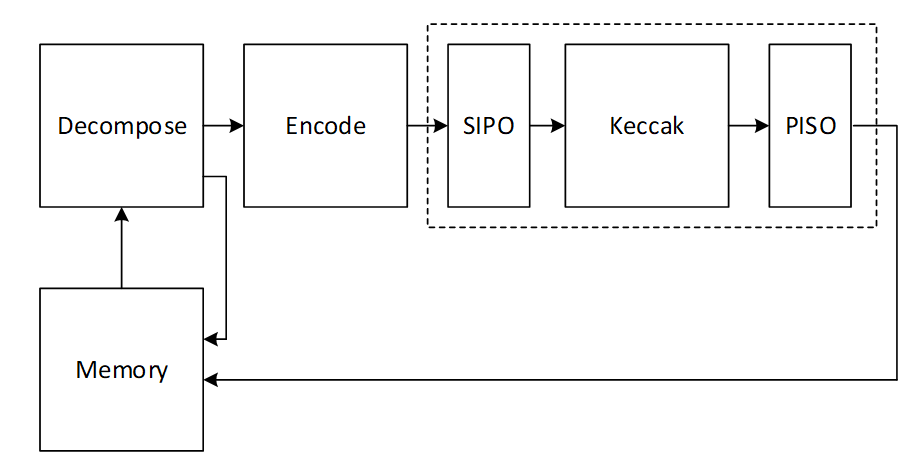

Keccak architecture

Hashing operation takes a significant portion of PQC latency. All samplers need to be fed by hashing functions. i.e., SHAKE128 and SHAKE256. Therefore, to improve the efficiency of the implementation, one should increase efficiency on the Keccak core, providing higher throughput using fewer hardware resources. Keccak core requires 24 rounds of the sponge function computation. We develop a dedicated SIPO (serial-in, parallel-out) and PISO (parallel-in, serial-out) for interfacing with this core in its input and output, respectively.

We propose an approach to design hardware rejection sampling architecture, which can offer more efficiency. This approach enables us to cascade the Keccak unit to rejection sampler and polynomial multiplication units that results in avoiding the memory access.

In our optimized architecture, to reduce the failure probability due to the non-deterministic pattern of rejection sampling and avoid any stall cycle in polynomial multiplication, we use a FIFO to store sampled coefficients that match the speed of polynomial multiplication. The proposed sampler works in parallel with the Keccak core. Therefore, the latency for sampling unit is absorbed within the latency for a concurrently running Keccak core.

In the input side, there are two different situations:

- The given block from SIPO is the last absorbing round.

In this situation, the output PISO buffer should receive the Keccak state. - The given block from SIPO is not the last absorbing round.

In this situation, the output PISO buffer should not receive the Keccak state. However, the next input block from SIPO needs to be XORed with the Keccak state.

There are two possible scenarios when the Keccak state has to be saved in the PISO buffer on the output side:

- PISO buffer EMPTY flag is not set.

In this situation, Keccak should hold on and maintain the current state until EMPTY is activated and transfer the state into PISO buffer. - PISO buffer EMPTY flag is set.

In this situation, the state can be transferred to PISO buffer and the following round of Keccak (if any) can be started.

PISO Buffer

The output of the Keccak unit is used to feed four different samplers at varying data rates. The Parallel in Serial out buffer is a generic buffer that can take the full width of the Keccak state and deliver it to the sampler units at the appropriate data rates.

Input data from the Keccak can come at 1088 or 1344 bits per clock. During expand mask operation, the buffer needs to be written from a write pointer while valid data remains in the buffer. All other modes only require writing the full Keccak state into the buffer when it is empty.

Output data rate varies - 32 bits for RejBounded and SampleInBall, 80 bits for Expand Mask and 120 bits for SampleRejq.

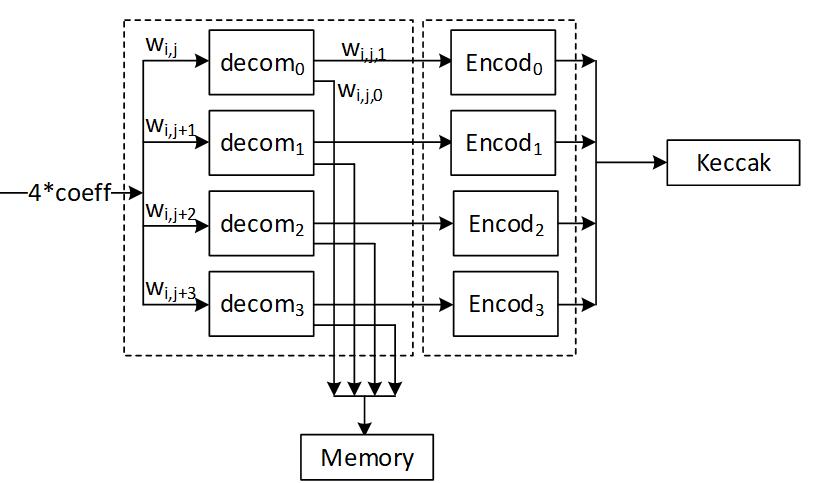

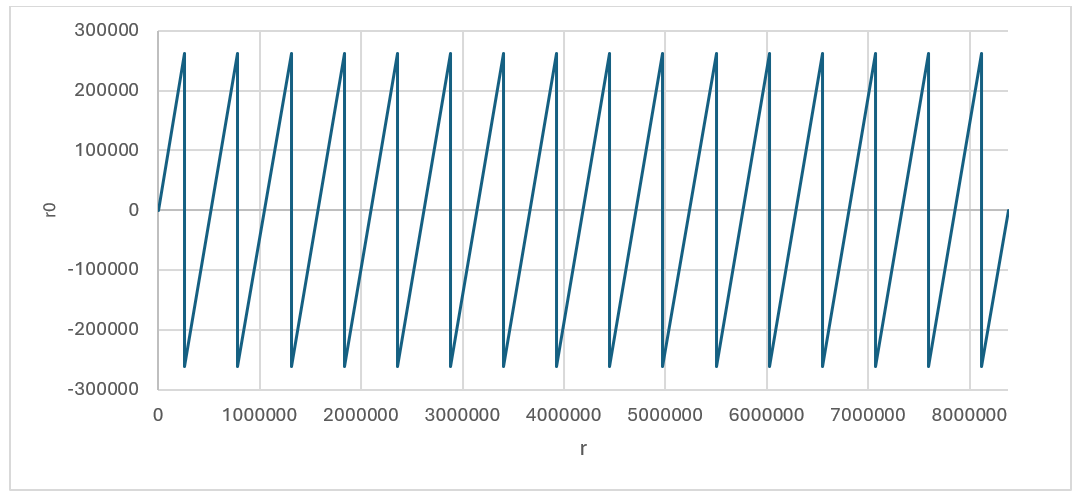

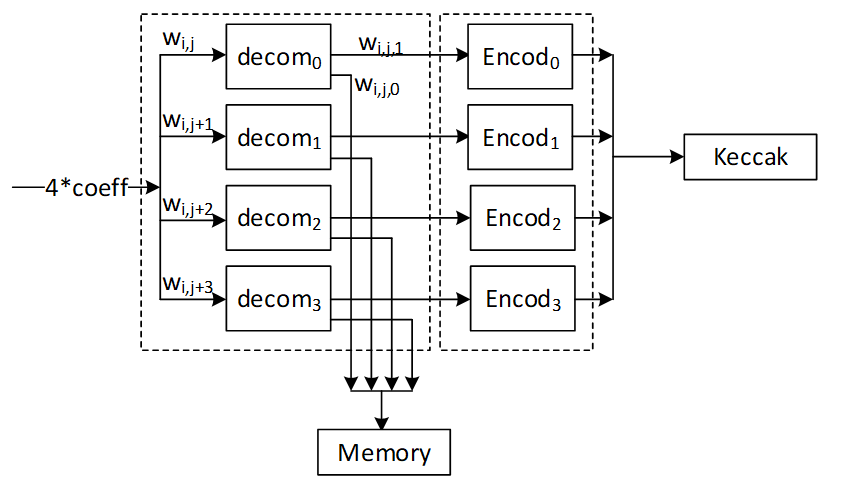

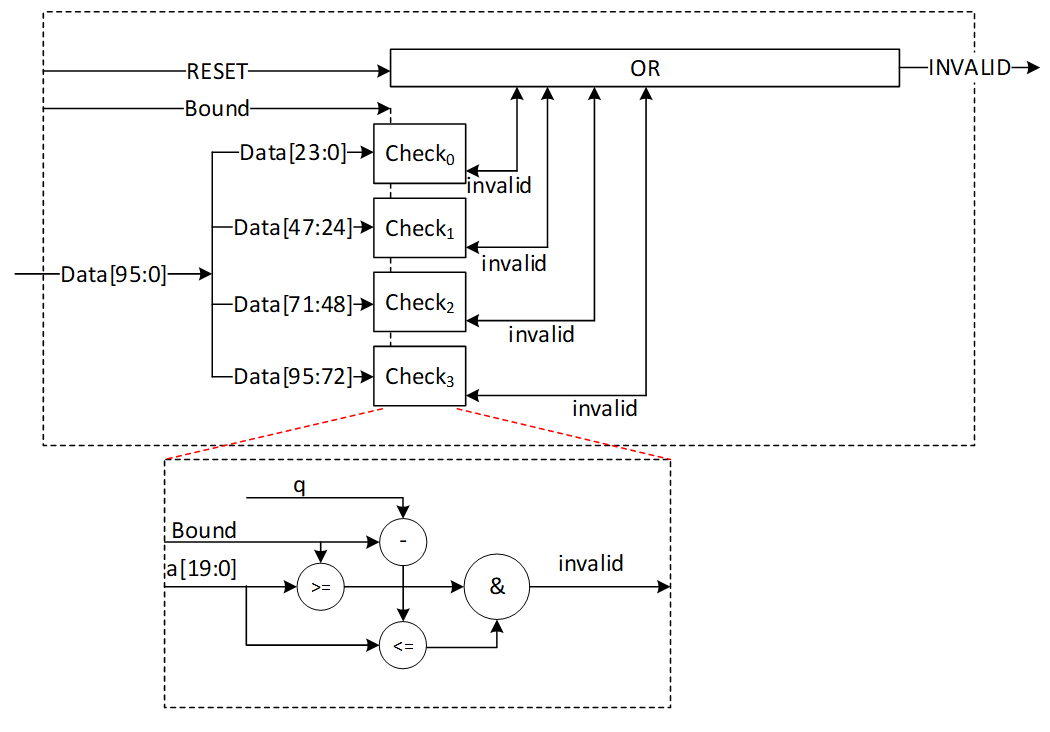

Expand Mask architecture

Dilithium samples the polynomials that make up the vectors and matrices independently, using a fixed seed value and a nonce value that increases the security as the input for Keccak. Keccak is used to take these seed and nonce and generate random stream bits.

Expand Mask takes γ-bits (20-bit for ML-DSA-87) and samples a vector such that all coefficients are in range of [-γ+1, γ]. It continues to sample all required coefficients, n=256, for a polynomial.

After sampling a polynomial with 256 coefficients, nonce will be changed and a new random stream will be generated using Keccak core and will be sampled by expand mask unit.

The output of this operation results in a l different polynomial while each polynomial includes 256 coefficients.

y1,0 y1,l-1

Expand Mask is used in signing operation of Dilithium. The output of expand mask sampler is stored into memory and will be used as an input for NTT module.

We propose an architecture to remove the cost of memory access since NTT needs input in a specific format, i.e., 4 coefficients per each memory address. To achieve this, we need to have a balanced throughput between all these modules to avoid large buffering or conflict between them.

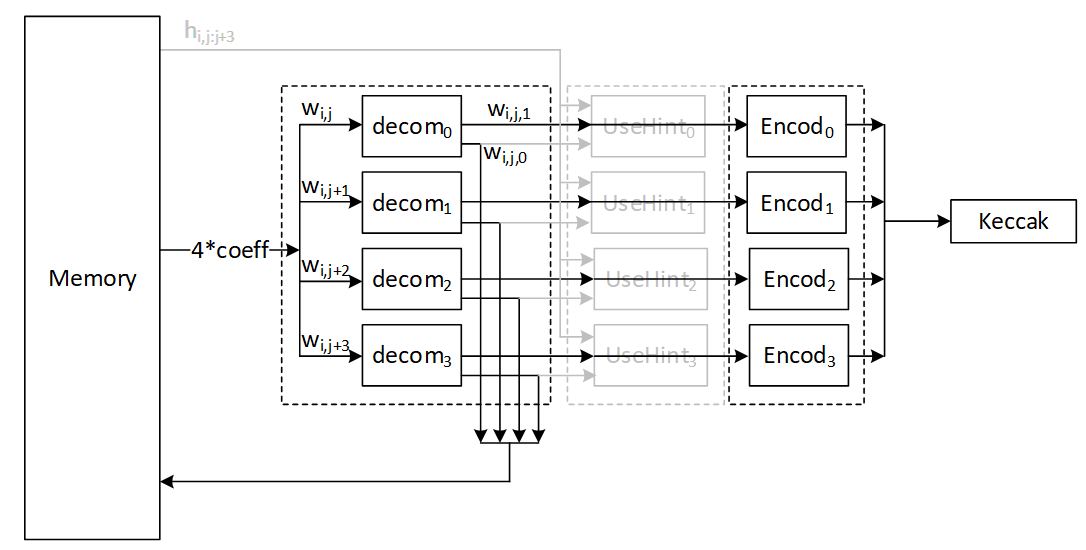

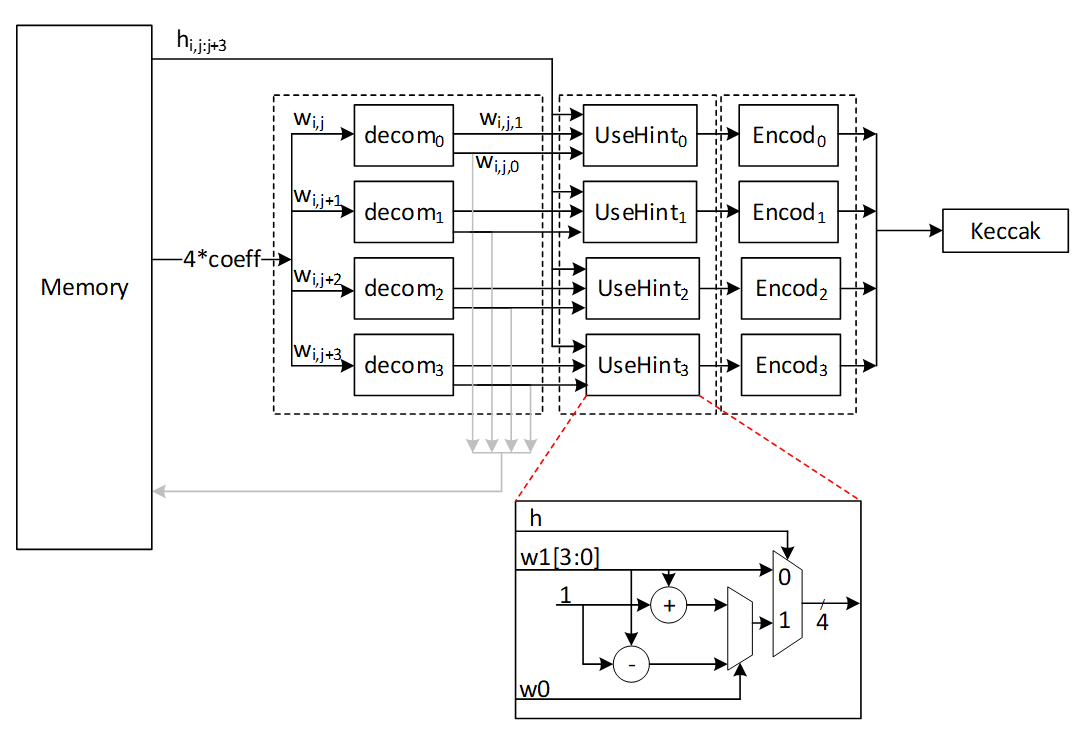

High-level architecture is illustrated as follows:

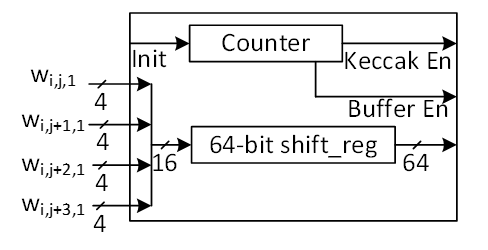

Keccak interface to Expand Mask

Keccak is used in SHAKE-256 configuration for expand mask operation. Hence, it will take the input data and generate 1088-bit output after each round. We propose implementing of Keccak while each round takes 12 cycles. The format of input data is as follows:

Input data = ρ' | n

Where ρ' is seed with 512-bits, n=κ+r is the 16-bit nonce that will be incremented for each polynomial (r++) or if the signature is rejected by validity checks (++).

Since each bits (20-bit in for ML-DSA-87) is used for one coefficient, 256*20=5120 bits are required for one polynomial which needs 5 rounds (5120/1088=4.7) of Keccak.

To sample l polynomial (l=7 for ML-DSA-87), we need a total of 5*7 = 35 rounds of Keccak.

There are two paths for Keccak input. While the input can be set by controller for new nonce in the case of next polynomial or rejected signature, the loop path is used to rerun Keccak for completing the current sampling process with l polynomial.

Expand mask cannot take all 1088-bit output parallelly since it makes hardware architecture too costly and complex, and also there is no other input from Keccak for the next 12 cycles. Therefore, we propose a parallel-input serial-output (PISO) unit in between to store the Keccak output and feed rejection unit sequentially.

Expand Mask

This unit takes data from the output of SHAKE-256 stored in a PISO buffer. The required cycles for this unit is 4.7 rounds of Keccak for one polynomial and 35 rounds of Keccak for all required polynomial (l polynomial which l=7 for ML-DSA-87).

In our optimized architecture, this unit works in parallel with the Keccak core. Therefore, the latency for expand mask is absorbed within the latency for a concurrently running Keccak core.

Our proposed NTT unit takes four coefficients per cycle from one memory address. It helps to avoid memory access challenges and make the control logic too complicated. This implies that the optimal speed of the expand mask module is to sample four coefficients per cycle.

There are 4 rejection sampler circuits corresponding to each 20-bit input. The total coefficient after each round of Keccak is 1088/20 = 54.4 coefficients. We keep expand mask unit working in all cycles and generating 4 coefficients per cycle without any interrupt. That means 12*4=48 coefficients can be processed during each Keccak round.

After 12 cycles, 48 coefficients are processed by the expand mask unit, and there are still 128-bit inside PISO. To maximize the utilization factor of our hardware resources, Keccak core will check the PISO status. If PISO contains 4 coefficients or more (the required inputs for expand mask unit), EMPTY flag will not be set, and Keccak will wait until the next cycle. Hence, expand mask unit takes 13 cycles to process 52 coefficients, and the last 48-bit will be combined with the next Keccak round to be processed.

Performance of Expand Mask

Sampling a polynomial with 256 coefficients takes 256/4=64 cycles. The first round of Keccak needs 12 cycles, and the rest of Keccak operation will be parallel with expand mask operation.

For a complete expand mask for Dilithium ML-DSA-87 with 7 polynomials, 7*64+12=460 cycles are required using sequential operation. However, our design can be duplicated to enable parallel sampling for two different polynomials. Having two parallel design results in 268 cycles, while three parallel design results in 204 cycles at the cost of more resource utilization.

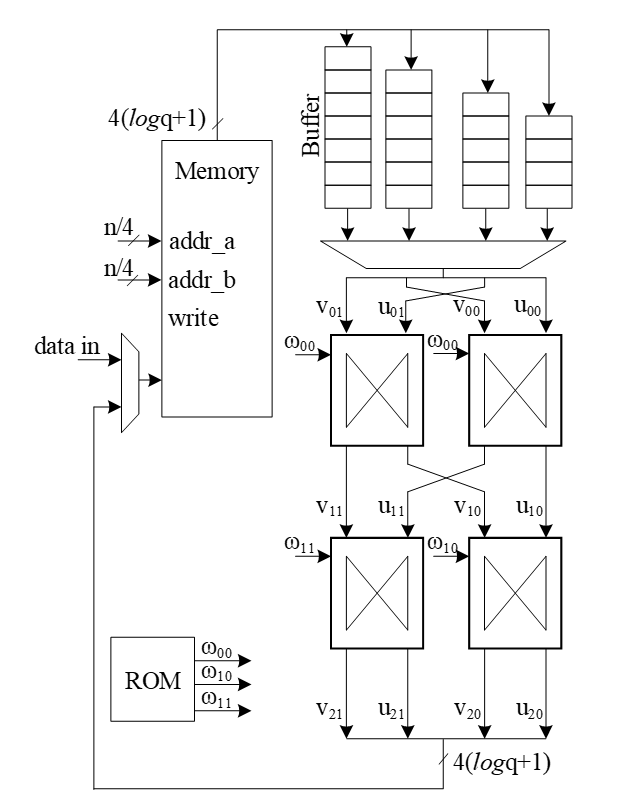

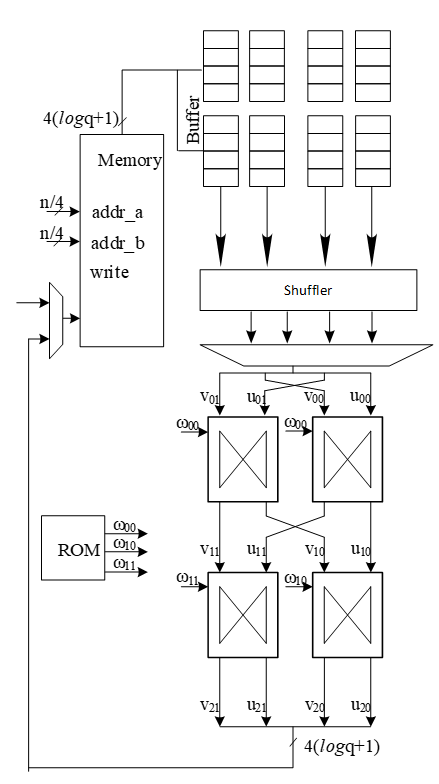

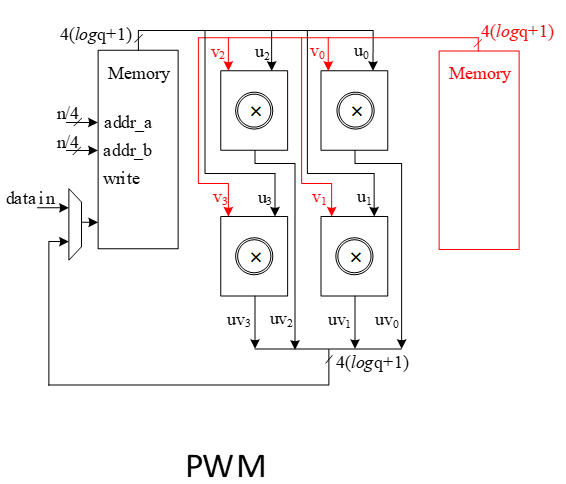

NTT architecture

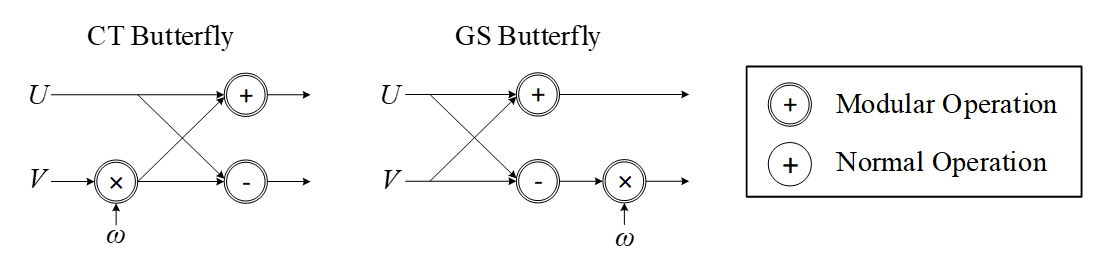

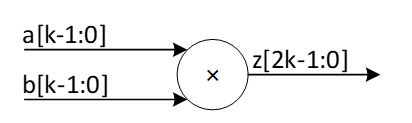

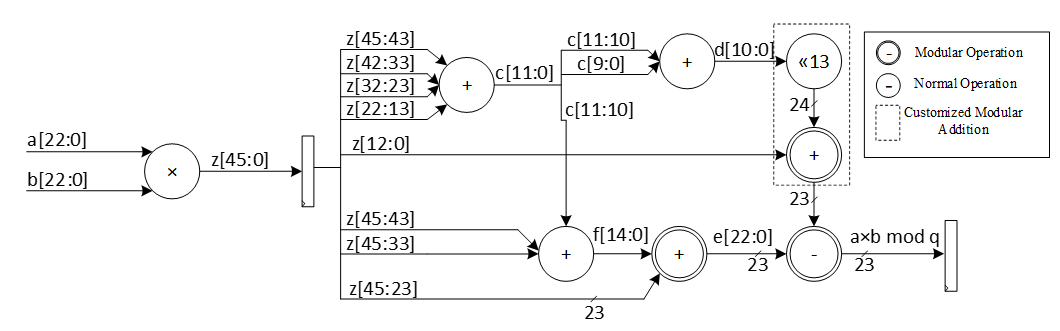

The most computationally intensive operation in lattice-based PQC schemes is polynomial multiplication which can be accelerated using NTT. However, NTT is still a performance bottleneck in lattice-based cryptography. We propose improved NTT architecture with highly efficient modular reduction. This architecture supports NTT, INTT, and point-wise multiplication (PWM) to enhance the design from resource sharing point-of-view while reducing the pre-processing cost of NTT and post-processing cost of INTT.

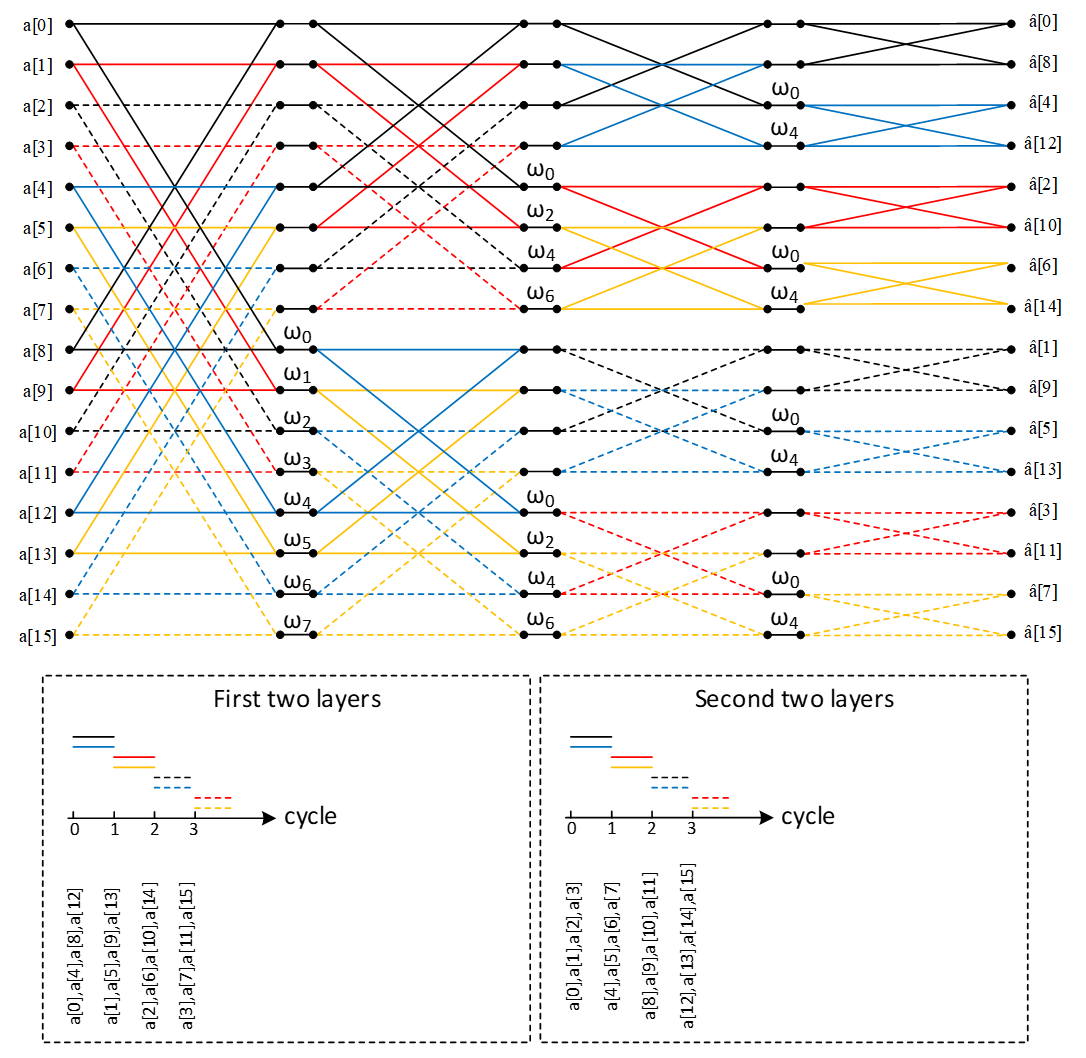

Our NTT architecture exploits a merged-layer NTT technique using two pipelined stages with two parallel cores in each stage level, making 4 butterfly cores in total. Our proposed parallel pipelined butterfly cores enable us to perform Radix-4 NTT/INTT operation with 4 parallel coefficients. While memory bandwidth limits the efficiency of the butterfly operation, we use a specific memory pattern to store four coefficients per address.

An NTT operation can be regarded as an iterative operation by applying a sequence of butterfly operations on the input polynomial coefficients. A butterfly operation is an arithmetic operation that combines two coefficients to obtain two outputs. By repeating this process for different pairs of coefficients, the NTT operation can be computed in a logarithmic number of steps.

Cooley-Tukey (CT) and Gentleman-Sande (GS) butterfly configurations can be used to facilitate NTT/INTT computation. The bit-reverse function reverses the bits of the coefficient index. However, the bit-reverse permutation can be skipped by using CT butterfly for NTT and GS for INTT.

We propose a merged NTT architecture using dual radix-4 design by employing four pipelined stages with two parallel cores at each stage level.

The following presents the high-level architecture of our proposed NTT to take advantage of Merged architectural design:

The following figure illustrates a 16-point NTT data flow based on our proposed architecture:

A merged-layer NTT technique uses two pipelined stages with two parallel cores in each stage level, making 4 butterfly cores in total. The parallel pipelined butterfly cores enable us to perform Radix-4 NTT/INTT operation with 4 parallel coefficients.

However, NTT requires a specific memory pattern that may limit the efficiency of the butterfly operation. For Dilithium use case, there are n=256 coefficients per polynomial that requires log n=8 layers of NTT operations. Each butterfly unit takes two coefficients while difference between the indexes is 28-i in ith stage. That means for the first stage, the given indexes for each butterfly unit are (k, k+128):

Stage 1 input indexes: {(0, 128), {1, 129), (2, 130), …, (127, 255)}

Stage 2 input indexes: {(0, 64), {1, 65), (2, 66), …, (63, 127), (128, 192), (129, 193), …, (191, 255)}

…

Stage 8 input indexes: {(0, 1), {2, 3), (4, 5), …, (254, 255)}

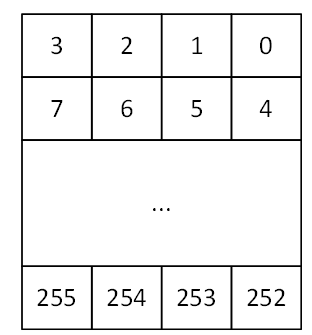

There are several considerations for that:

- We need access to 4 coefficients per cycle to match the throughput into 2×2 butterfly units.

- An optimized architecture provides a memory with only one reading port, and one writing port.

- Based on the previous two notes, each memory address contains 4 coefficients.

- The initial coefficients are produced sequentially by Keccak and samplers. Specifically, they begin with 0 and continue incrementally up to 255. Hence, at the very beginning cycle, the memory contains (0, 1, 2, 3) in the first address, (4, 5, 6, 7) in second address, and so on.

- The cost of in-place memory relocation to align the memory content is not negligible. Particularly, it needs to be repeated for each stage.

While memory bandwidth limits the efficiency of the butterfly operation, we use a specific memory pattern to store four coefficients per address.

We propose a pipeline architecture the read memory in a particular pattern and using a set of buffers, the corresponding coefficients are fed into NTT block.

The initial memory contains the indexes as follows:

| Address | Initial Memory Content | |||

|---|---|---|---|---|

| 0 | 0 | 1 | 2 | 3 |

| 1 | 4 | 5 | 6 | 7 |

| 2 | 8 | 9 | 10 | 11 |

| 3 | 12 | 13 | 14 | 15 |

| 4 | 16 | 17 | 18 | 19 |

| 5 | 20 | 21 | 22 | 23 |

| 6 | 24 | 25 | 26 | 27 |

| 7 | 28 | 29 | 30 | 31 |

| 8 | 32 | 33 | 34 | 35 |

| 9 | 36 | 37 | 38 | 39 |

| 10 | 40 | 41 | 42 | 43 |

| 11 | 44 | 45 | 46 | 47 |

| 12 | 48 | 49 | 50 | 51 |

| 13 | 52 | 53 | 54 | 55 |

| 14 | 56 | 57 | 58 | 59 |

| 15 | 60 | 61 | 62 | 63 |

| 16 | 64 | 65 | 66 | 67 |

| 17 | 68 | 69 | 70 | 71 |

| 18 | 72 | 73 | 74 | 75 |

| 19 | 76 | 77 | 78 | 79 |

| 20 | 80 | 81 | 82 | 83 |

| 21 | 84 | 85 | 86 | 87 |

| 22 | 88 | 89 | 90 | 91 |

| 23 | 92 | 93 | 94 | 95 |

| 24 | 96 | 97 | 98 | 99 |

| 25 | 100 | 101 | 102 | 103 |

| 26 | 104 | 105 | 106 | 107 |

| 27 | 108 | 109 | 110 | 111 |

| 28 | 112 | 113 | 114 | 115 |

| 29 | 116 | 117 | 118 | 119 |

| 30 | 120 | 121 | 122 | 123 |

| 31 | 124 | 125 | 126 | 127 |

| 32 | 128 | 129 | 130 | 131 |

| 33 | 132 | 133 | 134 | 135 |

| 34 | 136 | 137 | 138 | 139 |

| 35 | 140 | 141 | 142 | 143 |

| 36 | 144 | 145 | 146 | 147 |

| 37 | 148 | 149 | 150 | 151 |

| 38 | 152 | 153 | 154 | 155 |

| 39 | 156 | 157 | 158 | 159 |

| 40 | 160 | 161 | 162 | 163 |

| 41 | 164 | 165 | 166 | 167 |

| 42 | 168 | 169 | 170 | 171 |

| 43 | 172 | 173 | 174 | 175 |

| 44 | 176 | 177 | 178 | 179 |

| 45 | 180 | 181 | 182 | 183 |

| 46 | 184 | 185 | 186 | 187 |

| 47 | 188 | 189 | 190 | 191 |

| 48 | 192 | 193 | 194 | 195 |

| 49 | 196 | 197 | 198 | 199 |

| 50 | 200 | 201 | 202 | 203 |

| 51 | 204 | 205 | 206 | 207 |

| 52 | 208 | 209 | 210 | 211 |

| 53 | 212 | 213 | 214 | 215 |

| 54 | 216 | 217 | 218 | 219 |

| 55 | 220 | 221 | 222 | 223 |

| 56 | 224 | 225 | 226 | 227 |

| 57 | 228 | 229 | 230 | 231 |

| 58 | 232 | 233 | 234 | 235 |

| 59 | 236 | 237 | 238 | 239 |

| 60 | 240 | 241 | 242 | 243 |

| 61 | 244 | 245 | 246 | 247 |

| 62 | 248 | 249 | 250 | 251 |

| 63 | 252 | 253 | 254 | 255 |

Suppose memory is read in this pattern:

Addr: 0, 16, 32, 48, 1, 17, 33, 49, …, 15, 31, 47, 63

The input goes to the customized buffer architecture as follows:

| 0 | → | |||||||

|---|---|---|---|---|---|---|---|---|

| 1 | ||||||||

| 2 | ||||||||

| 3 |

Cycle 0 reading address 0

| 64 | → | 0 | ||||||

|---|---|---|---|---|---|---|---|---|

| 65 | 1 | |||||||

| 66 | 2 | |||||||

| 67 | 3 |

Cycle 1 reading address 16

| 128 | → | 64 | 0 | |||||

|---|---|---|---|---|---|---|---|---|

| 129 | 65 | 1 | ||||||

| 130 | 66 | 2 | ||||||

| 131 | 67 | 3 |

Cycle 2 reading address 32

| 192 | → | 128 | 64 | 0 | ||||

|---|---|---|---|---|---|---|---|---|

| 193 | 129 | 65 | 1 | |||||

| 194 | 130 | 66 | 2 | |||||

| 195 | 131 | 67 | 3 |

Cycle 3 reading address 48

| 4 | → | 192 | 128 | 64 | 0 | |||

|---|---|---|---|---|---|---|---|---|

| 5 | 193 | 129 | 65 | 1 | ||||

| 6 | 194 | 130 | 66 | 2 | ||||

| 7 | 195 | 131 | 67 | 3 |

Cycle 4 reading address 1

| 68 | → | 4 | 192 | 128 | 64 | |||

|---|---|---|---|---|---|---|---|---|

| 69 | 5 | 193 | 129 | 65 | 1 | |||

| 70 | 6 | 194 | 130 | 66 | 2 | |||

| 71 | 7 | 195 | 131 | 67 | 3 |

Cycle 5 reading address 17

The highlighted value in the first buffer contains the required coefficients for our butterfly units, i.e., (0, 128) and (64, 192). Since we merged the 1 and second layers of NTT stages, the output of the first parallel butterfly units need to exchange one coefficient and then the required input for the second parallel set of butterfly units is ready, i.e., (0, 64) and (128, 192).

After completing the first round of operation including NTT stage 1 and 2, the memory contains the following data:

| Address | Memory Content after 1&2 stages | |||

|---|---|---|---|---|

| 0 | 0 | 64 | 128 | 192 |

| 1 | 1 | 65 | 129 | 193 |

| 2 | 2 | 66 | 130 | 194 |

| 3 | 3 | 67 | 131 | 195 |

| 4 | 4 | 68 | 132 | 196 |

| 5 | 5 | 69 | 133 | 197 |

| 6 | 6 | 70 | 134 | 198 |

| 7 | 7 | 71 | 135 | 199 |

| 8 | 8 | 72 | 136 | 200 |

| 9 | 9 | 73 | 137 | 201 |

| 10 | 10 | 74 | 138 | 202 |

| 11 | 11 | 75 | 139 | 203 |

| 12 | 12 | 76 | 140 | 204 |

| 13 | 13 | 77 | 141 | 205 |

| 14 | 14 | 78 | 142 | 206 |

| 15 | 15 | 79 | 143 | 207 |

| 16 | 16 | 80 | 144 | 208 |

| 17 | 17 | 81 | 145 | 209 |

| 18 | 18 | 82 | 146 | 210 |

| 19 | 19 | 83 | 147 | 211 |

| 20 | 20 | 84 | 148 | 212 |

| 21 | 21 | 85 | 149 | 213 |

| 22 | 22 | 86 | 150 | 214 |

| 23 | 23 | 87 | 151 | 215 |

| 24 | 24 | 88 | 152 | 216 |

| 25 | 25 | 89 | 153 | 217 |

| 26 | 26 | 90 | 154 | 218 |

| 27 | 27 | 91 | 155 | 219 |

| 28 | 28 | 92 | 156 | 220 |

| 29 | 29 | 93 | 157 | 221 |

| 30 | 30 | 94 | 158 | 222 |

| 31 | 31 | 95 | 159 | 223 |

| 32 | 32 | 96 | 160 | 224 |

| 33 | 33 | 97 | 161 | 225 |

| 34 | 34 | 98 | 162 | 226 |

| 35 | 35 | 99 | 163 | 227 |

| 36 | 36 | 100 | 164 | 228 |

| 37 | 37 | 101 | 165 | 229 |

| 38 | 38 | 102 | 166 | 230 |

| 39 | 39 | 103 | 167 | 231 |

| 40 | 40 | 104 | 168 | 232 |

| 41 | 41 | 105 | 169 | 233 |

| 42 | 42 | 106 | 170 | 234 |

| 43 | 43 | 107 | 171 | 235 |

| 44 | 44 | 108 | 172 | 236 |

| 45 | 45 | 109 | 173 | 237 |

| 46 | 46 | 110 | 174 | 238 |

| 47 | 47 | 111 | 175 | 239 |

| 48 | 48 | 112 | 176 | 240 |

| 49 | 49 | 113 | 177 | 241 |

| 50 | 50 | 114 | 178 | 242 |

| 51 | 51 | 115 | 179 | 243 |

| 52 | 52 | 116 | 180 | 244 |

| 53 | 53 | 117 | 181 | 245 |

| 54 | 54 | 118 | 182 | 246 |

| 55 | 55 | 119 | 183 | 247 |

| 56 | 56 | 120 | 184 | 248 |

| 57 | 57 | 121 | 185 | 249 |

| 58 | 58 | 122 | 186 | 250 |

| 59 | 59 | 123 | 187 | 251 |

| 60 | 60 | 124 | 188 | 252 |

| 61 | 61 | 125 | 189 | 253 |

| 62 | 62 | 126 | 190 | 254 |

| 63 | 63 | 127 | 191 | 255 |

The same process can be applied in the next round to perform NTT stage 3 and 4.

| 0 | → | |||||||

|---|---|---|---|---|---|---|---|---|

| 64 | ||||||||

| 128 | ||||||||

| 192 |

Cycle 0 reading address 0

| 16 | → | 0 | ||||||

|---|---|---|---|---|---|---|---|---|

| 80 | 64 | |||||||

| 144 | 128 | |||||||

| 208 | 192 |

Cycle 1 reading address 16

| 32 | → | 16 | 0 | |||||

|---|---|---|---|---|---|---|---|---|

| 96 | 80 | 64 | ||||||

| 160 | 144 | 128 | ||||||

| 224 | 208 | 192 |

Cycle 2 reading address 32

| 48 | → | 32 | 16 | 0 | ||||

|---|---|---|---|---|---|---|---|---|

| 112 | 96 | 80 | 64 | |||||

| 176 | 160 | 144 | 128 | |||||

| 240 | 224 | 208 | 192 |

Cycle 3 reading address 48

| 1 | → | 48 | 32 | 16 | 0 | |||

|---|---|---|---|---|---|---|---|---|

| 65 | 112 | 96 | 80 | 64 | ||||

| 129 | 176 | 160 | 144 | 128 | ||||

| 193 | 240 | 224 | 208 | 192 |

Cycle 4 reading address 1

| 17 | → | 1 | 48 | 32 | 16 | |||

|---|---|---|---|---|---|---|---|---|

| 81 | 65 | 112 | 96 | 80 | 64 | |||

| 145 | 129 | 176 | 160 | 144 | 128 | |||

| 209 | 193 | 240 | 224 | 208 | 192 |

Cycle 5 reading address 17

After completing all stages, the memory contents would be as follows:

| Address | Memory Content after Stage 7&8 | |||

|---|---|---|---|---|

| 0 | 0 | 1 | 2 | 3 |

| 1 | 4 | 5 | 6 | 7 |

| 2 | 8 | 9 | 10 | 11 |

| 3 | 12 | 13 | 14 | 15 |

| 4 | 16 | 17 | 18 | 19 |

| 5 | 20 | 21 | 22 | 23 |

| 6 | 24 | 25 | 26 | 27 |

| 7 | 28 | 29 | 30 | 31 |

| 8 | 32 | 33 | 34 | 35 |

| 9 | 36 | 37 | 38 | 39 |

| 10 | 40 | 41 | 42 | 43 |

| 11 | 44 | 45 | 46 | 47 |

| 12 | 48 | 49 | 50 | 51 |

| 13 | 52 | 53 | 54 | 55 |

| 14 | 56 | 57 | 58 | 59 |

| 15 | 60 | 61 | 62 | 63 |

| 16 | 64 | 65 | 66 | 67 |

| 17 | 68 | 69 | 70 | 71 |

| 18 | 72 | 73 | 74 | 75 |

| 19 | 76 | 77 | 78 | 79 |

| 20 | 80 | 81 | 82 | 83 |

| 21 | 84 | 85 | 86 | 87 |

| 22 | 88 | 89 | 90 | 91 |

| 23 | 92 | 93 | 94 | 95 |

| 24 | 96 | 97 | 98 | 99 |

| 25 | 100 | 101 | 102 | 103 |

| 26 | 104 | 105 | 106 | 107 |

| 27 | 108 | 109 | 110 | 111 |

| 28 | 112 | 113 | 114 | 115 |

| 29 | 116 | 117 | 118 | 119 |

| 30 | 120 | 121 | 122 | 123 |

| 31 | 124 | 125 | 126 | 127 |

| 32 | 128 | 129 | 130 | 131 |

| 33 | 132 | 133 | 134 | 135 |

| 34 | 136 | 137 | 138 | 139 |

| 35 | 140 | 141 | 142 | 143 |

| 36 | 144 | 145 | 146 | 147 |

| 37 | 148 | 149 | 150 | 151 |

| 38 | 152 | 153 | 154 | 155 |

| 39 | 156 | 157 | 158 | 159 |

| 40 | 160 | 161 | 162 | 163 |

| 41 | 164 | 165 | 166 | 167 |

| 42 | 168 | 169 | 170 | 171 |

| 43 | 172 | 173 | 174 | 175 |

| 44 | 176 | 177 | 178 | 179 |

| 45 | 180 | 181 | 182 | 183 |

| 46 | 184 | 185 | 186 | 187 |

| 47 | 188 | 189 | 190 | 191 |

| 48 | 192 | 193 | 194 | 195 |

| 49 | 196 | 197 | 198 | 199 |

| 50 | 200 | 201 | 202 | 203 |

| 51 | 204 | 205 | 206 | 207 |

| 52 | 208 | 209 | 210 | 211 |

| 53 | 212 | 213 | 214 | 215 |

| 54 | 216 | 217 | 218 | 219 |

| 55 | 220 | 221 | 222 | 223 |

| 56 | 224 | 225 | 226 | 227 |

| 57 | 228 | 229 | 230 | 231 |

| 58 | 232 | 233 | 234 | 235 |

| 59 | 236 | 237 | 238 | 239 |

| 60 | 240 | 241 | 242 | 243 |

| 61 | 244 | 245 | 246 | 247 |

| 62 | 248 | 249 | 250 | 251 |

| 63 | 252 | 253 | 254 | 255 |

The proposed method saves the time needed for shuffling and reordering, while using only a little more memory.

With this memory access pattern, writes to the memory are in order (0, 1, 2, 3, etc) while reads wraparound with a step of 16 (0, 16, 32 48, 1, 17, 33, 49, etc). Hence, there will be a memory conflict where writes to addresses take place before the data is read and provided to the butterfly 2x2 module. To resolve this, a set of 3 base addresses are given to the NTT module – src address, interim address and dest address that belong to 3 separate sections in memory. The NTT module will access the memory with the appropriate base address for each round as follows:

| Round | Read base address | Write base address |

|---|---|---|

| 0 | src | interim |

| 1 | interim | dest |

| 2 | dest | interim |

| 3 | interim | dest |

At the end of NTT operation, results must be located in the section with the dest base address. This will also provide the benefit of preserving the original input for later use in keygen or signing operations. The same memory access pattern is followed for INTT operation as well. Note that Adam’s bridge controller may choose to make src and dest base addresses the same to save on memory usage, when original coefficients need not be preserved. In this case, the requirement is still to have a separate interim base address to avoid memory conflicts during NTT operation.

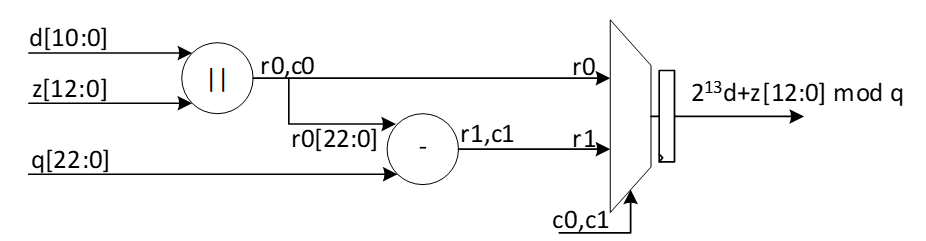

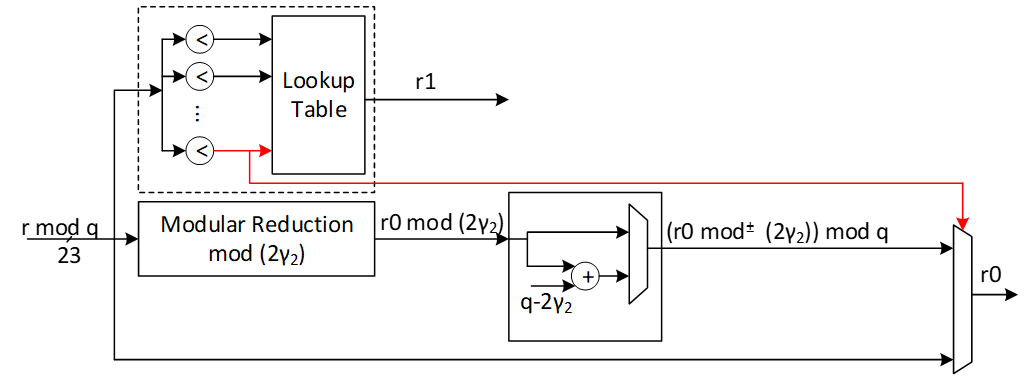

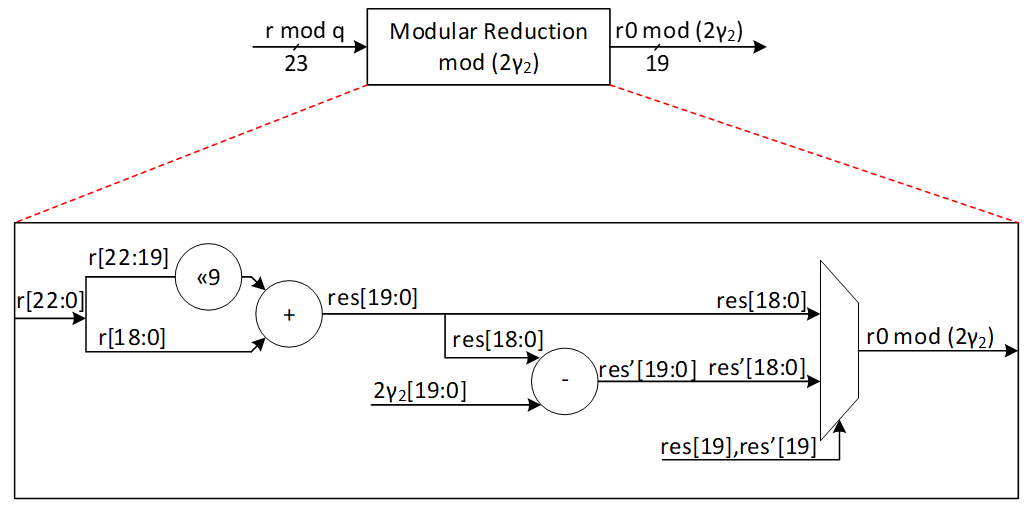

Modular Reduction in NTT

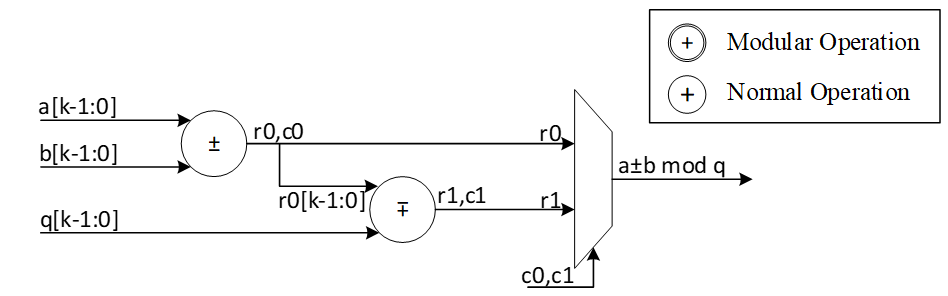

The modular addition/subtraction in hardware platform can be implemented by one additional subtraction/addition operations, as follows:

However, modular multiplication can be implemented using different techniques. The commonly used Barrett reduction and Montgomery reduction require additional multiplications and are more suitable for the non-specific modulus. Furthermore, Montgomery reduction needs two more steps to convert all inputs from normal domain to Montgomery domain and then convert back the results into normal domain. This conversation increases the latency of NTT operations and does not lead to the best performance. Hence, Barrett reduction and Montgomery reduction are expensive in time and hardware resources.

For Dilithium hardware accelerator, we can customize the reduction architecture based on the prime value of the scheme with q= 8,380,417 to design a hardware-friendly architecture that increase the efficiency of computation. The value of q can be presented by:

q=8,380,417=223-213+1

For the modular operation we have:

223=213-1 mod q

Suppose that all input operands are less than q, we have:

0≤a,b<q

z=a.b<q2=46'h3FE0_04FF_C001

Based on 223=213-1 mod q, we can rewrite the equation as follows:

z=223z45:23+z22:0=213z45:23-z45:23+z22:0=223z45:33+213z32:23-z45:23+z22:0=213z45:33-z45:33+213z32:23-z45:23+z22:0=223z45:43+213z42:33-z45:33+213z32:23-z45:23+z22:0=213z45:43-z45:43+213z42:33-z45:33+213z32:23-z45:23+z22:0=213z45:43+z42:33+z32:23+-z45:43-z45:33-z45:23+z22:0=213z45:43+z42:33+z32:23+z22:13+-z45:43-z45:33-z45:23+z12:0=213c-(z45:43+z45:33+z45:23)+z[12:0]

Where:

c=z45:43+z42:33+z32:23+z[22:13]<212

The value of c has 12 bits, and we can rewrite it as follows:

213c11:0=223c11:10+213c9:0=213c11:10-c11:10+213c9:0=213d-c11:10

d=c11:10+c9:0

So, the value of z mod q is as follows:

z=213c-z45:43+z45:33+z45:23+z12:0=213d+z12:0-z45:43+z45:33+z45:23+c11:10=213d+z12:0-e

Where:

e=z45:43+z45:33+z45:23+c11:10=f+z45:23 mod q

f[14:0]=z45:43+z45:33+c11:10

We use a modular addition for f+z45:23 to keep it less than q. This modular addition has one stage delay.

The addition between 213d and z12:0 can be implemented by concatenating since the first 13 bits of d are zero as follows:

g23:0=213d+z12:0=d10:0||z[12:0]

Since the result has more than 23 bits, we perform a modular addition to keep it less than q. For that, the regular modular addition will be replaced by the following architecture while c0=g23, r0=g[22:0]. In other words, c0=d10, r0[22:0]=d9:0||z[12:0]

The following figure shows the architecture of this reduction technique. As one can see, these functions do not need any multiplications in hardware and can be achieved by shifter and adder.

The modular multiplication is implemented with a 3-stage pipeline architecture. At the first pipeline stage, z=a·b is calculated. At the second pipeline stage, f+z[45:23] and 213d+z12:0 are calculated in parallel. At the third pipeline stage, a modular subtraction is executed to obtain the result and the result is output.

We do not need extra multiplications for our modular reduction, unlike Barrett and Montgomery algorithms. The operations of our reduction do not depend on the input data and do not leak any information. Our reduction using the modulus q= 8,380,417 is fast, efficient and constant-time.

Performance of NTT

For a complete NTT operation with 8 layers, i.e., n = 256, the proposed architecture takes 82=4 rounds. Each round involves 2564=64 operations in pipelined architecture. Hence, the latency of each round is equal to 64 (read from memory) + 8 (2 sequential butterfly latency) + 4 (input buffer latency) + 2 (wait between each two stages) = 78 cycles.

Round 0: stage 0 & 1

Round 1: stage 2 & 3

Round 2: stage 4 & 5

Round 3: stage 6 & 7

The total latency would be 4×78=312 cycles.

For a complete NTT/INTT operation for Dilithium ML-DSA-87 with 7 or 8 polynomials, 7*312=2184 or 8*312=2496 cycles are required. However, our design can be duplicated to enable parallel NTT for two different polynomials. Having two parallel design results in 1248 cycles.

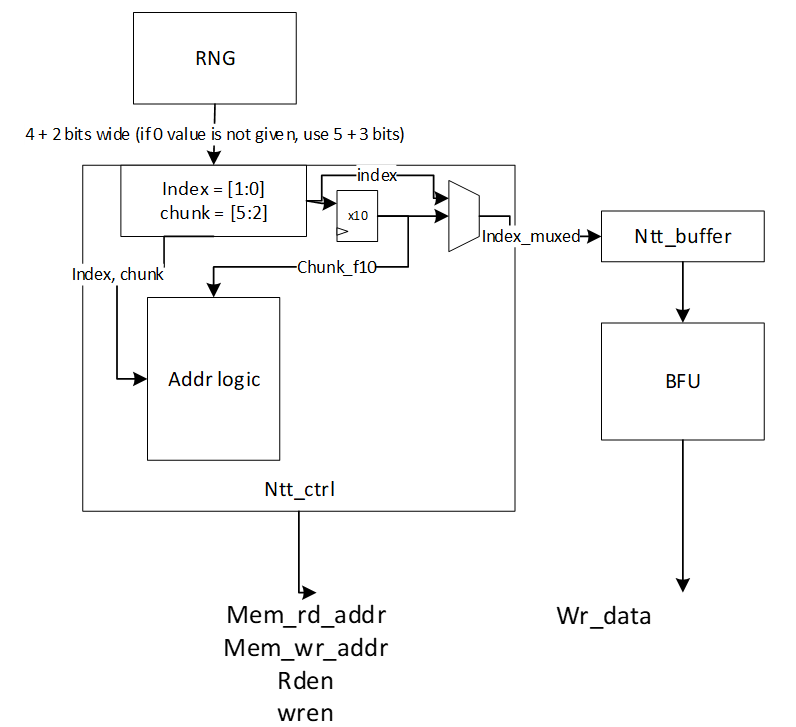

NTT shuffling countermeasure

To protect NTT, we have two options – shuffling the order of execution of coefficients and masking in-order computation such that NTT performs operation on two input shares per coefficient and produces two output shares. While masking is a strong countermeasure for side-channel attacks, it requires at least 4x the area and adds 4x the latency to one NTT operation. Shuffling is an implementation trick that can be used to provide randomization to some degree without area or latency overhead. In Adam’s Bridge, we employ a combination of both for protected design. One NTT core will have shuffling for both NTT and INTT modes. The second NTT core will have shuffling and masking on the first layer of computation for INTT mode with cascaded connection from a masked PWM module. In NTT mode, the second NTT core will employ only shuffling. PWM operations are masked by default. PWA and PWS operations are shuffled by default.

| Address | Memory Content | ||||

|---|---|---|---|---|---|

| 0 | 0 | 1 | 2 | 3 | |

| 1 | 4 | 5 | 6 | 7 | |

| 2 | 8 | 9 | 10 | 11 | |

| 3 | 12 | 13 | 14 | 15 | |

| 4 | 16 | 17 | 18 | 19 | |

| 5 | 20 | 21 | 22 | 23 | |

| 6 | 24 | 25 | 26 | 27 | |

| 7 | 28 | 29 | 30 | 31 | |

| 8 | 32 | 33 | 34 | 35 | |

| 9 | 36 | 37 | 38 | 39 | |

| 10 | 40 | 41 | 42 | 43 | |

| 11 | 44 | 45 | 46 | 47 | |

| 12 | 48 | 49 | 50 | 51 | |

| 13 | 52 | 53 | 54 | 55 | |

| 14 | 56 | 57 | 58 | 59 | |

| 15 | 60 | 61 | 62 | 63 | |

| 16 | 64 | 65 | 66 | 67 | |

| 17 | 68 | 69 | 70 | 71 | |

| 18 | 72 | 73 | 74 | 75 | |

| 19 | 76 | 77 | 78 | 79 | |

| 20 | 80 | 81 | 82 | 83 | |

| 21 | 84 | 85 | 86 | 87 | |

| 22 | 88 | 89 | 90 | 91 | |

| 23 | 92 | 93 | 94 | 95 | |

| 24 | 96 | 97 | 98 | 99 | |

| 25 | 100 | 101 | 102 | 103 | |

| 26 | 104 | 105 | 106 | 107 | |

| 27 | 108 | 109 | 110 | 111 | |

| 28 | 112 | 113 | 114 | 115 | |

| 29 | 116 | 117 | 118 | 119 | |

| 30 | 120 | 121 | 122 | 123 | |

| 31 | 124 | 125 | 126 | 127 | |

| 32 | 128 | 129 | 130 | 131 | |

| 33 | 132 | 133 | 134 | 135 | |

| 34 | 136 | 137 | 138 | 139 | |

| 35 | 140 | 141 | 142 | 143 | |

| 36 | 144 | 145 | 146 | 147 | |

| 37 | 148 | 149 | 150 | 151 | |

| 38 | 152 | 153 | 154 | 155 | |

| 39 | 156 | 157 | 158 | 159 | |

| 40 | 160 | 161 | 162 | 163 | |

| 41 | 164 | 165 | 166 | 167 | |

| 42 | 168 | 169 | 170 | 171 | |

| 43 | 172 | 173 | 174 | 175 | |

| 44 | 176 | 177 | 178 | 179 | |

| 45 | 180 | 181 | 182 | 183 | |

| 46 | 184 | 185 | 186 | 187 | |

| 47 | 188 | 189 | 190 | 191 | |

| 48 | 192 | 193 | 194 | 195 | |

| 49 | 196 | 197 | 198 | 199 | |

| 50 | 200 | 201 | 202 | 203 | |

| 51 | 204 | 205 | 206 | 207 | |

| 52 | 208 | 209 | 210 | 211 | |

| 53 | 212 | 213 | 214 | 215 | |

| 54 | 216 | 217 | 218 | 219 | |

| 55 | 220 | 221 | 222 | 223 | |

| 56 | 224 | 225 | 226 | 227 | |

| 57 | 228 | 229 | 230 | 231 | |

| 58 | 232 | 233 | 234 | 235 | |

| 59 | 236 | 237 | 238 | 239 | |

| 60 | 240 | 241 | 242 | 243 | |

| 61 | 244 | 245 | 246 | 247 | |

| 62 | 248 | 249 | 250 | 251 | |

| 63 | 252 | 253 | 254 | 255 |

To implement shuffling in NTT, the memory content is divided into 16 chunks. The highlighted section in the memory table above shows the 16 chunk start addresses. For example, the first chunk consists of addresses 0, 16, 32, 48. Second chunk has 1, 17, 33, 49, and so on. In NTT mode, memory read pattern is 0, 16, 32, 48, 1, 17, 33, 49, etc. The buffer in NTT module is updated to have two sections and is addressable to support INTT shuffling with matched search space as that of NTT mode.

During shuffling, two levels of randomization are done:

- Chunk order is randomized

- Start address within the selected chunk is also randomized.

With this technique, the search space is 16 ×416=236. For example, if chunk 5 is selected as the starting chunk, the input buffer in NTT mode is configured as below.

| 2151 | 2140 | 2133 | 2122 |

| 1511 | 1500 | 1493 | 1482 |

| 871 | 860 | 853 | 842 |

| 231 | 220 | 213 | 202 |

Then, the order of execution is randomized for NTT as a second level of randomization. For example, order of execution in NTT mode can be (22, 86, 150, 214), (23, 87, 151, 215), (20, 84, 148, 212), (21, 85, 149, 213). Note that, once a random start address is selected, the addresses increment from that point and wrap around until all 4 sets of coefficients have been processed in order. Similarly, once a random chunk is selected, rest of the chunks are processed in order and wrapped around until all chunks are covered. In this example, chunk processing order is 5, 6, 7, 8, …, 15, 0, 1, 2, 3, 4.

For the next chunk, buffer pointer switches to the top half. While the bottom half of the buffer is read and executed, each location of the top half is filled in the same cycle avoiding latency overhead. When all 4 entries of the lower buffer are processed, upper buffer is ready to be fed into BF units.

| 2193 | 2183 | 2173 | 2163 |

|---|---|---|---|

| 1552 | 1542 | 1532 | 1522 |

| 911 | 901 | 891 | 881 |

| 270 | 260 | 250 | 240 |

| 2151 | 2140 | 2133 | 2122 |

| 1511 | 1500 | 1493 | 1482 |

| 871 | 860 | 853 | 842 |

| 231 | 220 | 213 | 202 |

Above figure shows the flow of a shuffled NTT/INTT operation. The shuffler part of the controller is responsible for calculating the correct memory addresses and feed the correct inputs to the BFs.

When shuffling in NTT mode, the memory read and write addresses are computed as shown below.for i in range (0, 4):

Read address \= chunk \+ (i \* RD\_STEP)

Write address = chunk * 4 + (rand_index * WR_STEP)

Where rand_index is the randomized start index of execution order for an NTT operation

E.g. if selected chunk is 5, and rand_index is 2

Order of execution is 2, 3, 0, 1

Read address \= 5 \+ (0\*16), 5 \+ (1\*16), 5+(2\*16), 5+(3\*16) \= 5, 21, 37, 53

Write address \= (5\*4) \+ (2\*1), (5\*4) \+ (3\*1), (5\*4) \+ (0\*1), (5\*4) \+ (1\*1) \= 22, 23, 20, 21

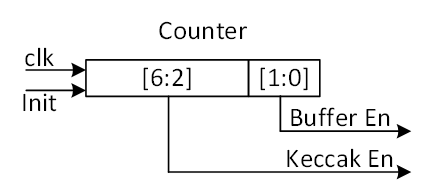

Figure below shows the additional control logic required to maintain the shuffling mechanism.

The index and chunk are obtained from a random number source. Chunk refers to starting chunk number and index refers to start address within the selected chunk. To account for BF latency, the index and chunk must be delayed appropriately (for our design, this latency is 10 cycles) for use in the controller shuffler logic.

The general address calculation for NTT is:

mem read addr=chunk+(countregular*STEPrd)

Since reading memory can be in order, a regular counter is used to read all 4 addresses of the selected chunk.

mem write addr=chunkf10*4+indexf10*STEPwr

The buffer address calculation for NTT is:

buffer write addr=countregular

buffer read addr=countindex

Where f10 refers to delayed values by 10 cycles. In this logic, chunk is updated every 4 cycles and buffer pointer is toggled (to top or bottom half) every 4 cycles.

The general address calculation for INTT is:

mem read addr=(chunk*4)+(index*STEPrd)

mem write addr=chunkf10+countregular*STEPwr

Since writing to memory can be in order, a regular counter is used to write all 4 addresses of the selected chunk.

In INTT, index need not be delayed since the BFs consume the coefficients in the next cycle.

The buffer address calculation for INTT is:

buffer write addr=indexf10

buffer read addr=countregular

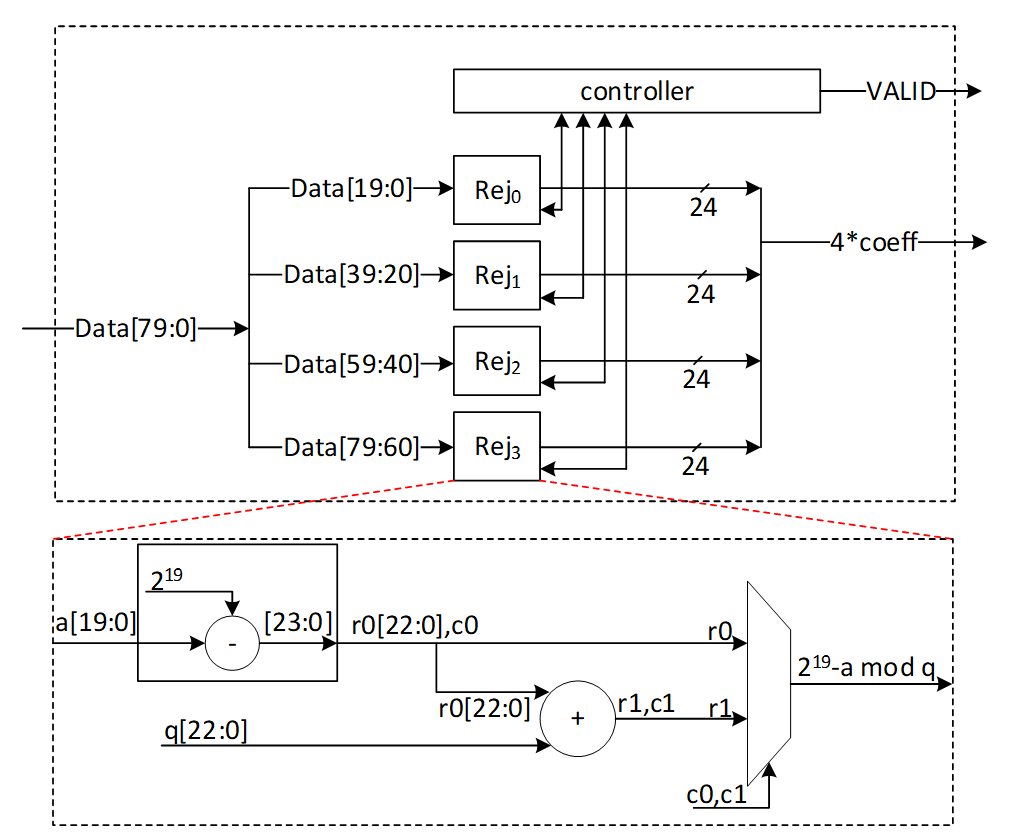

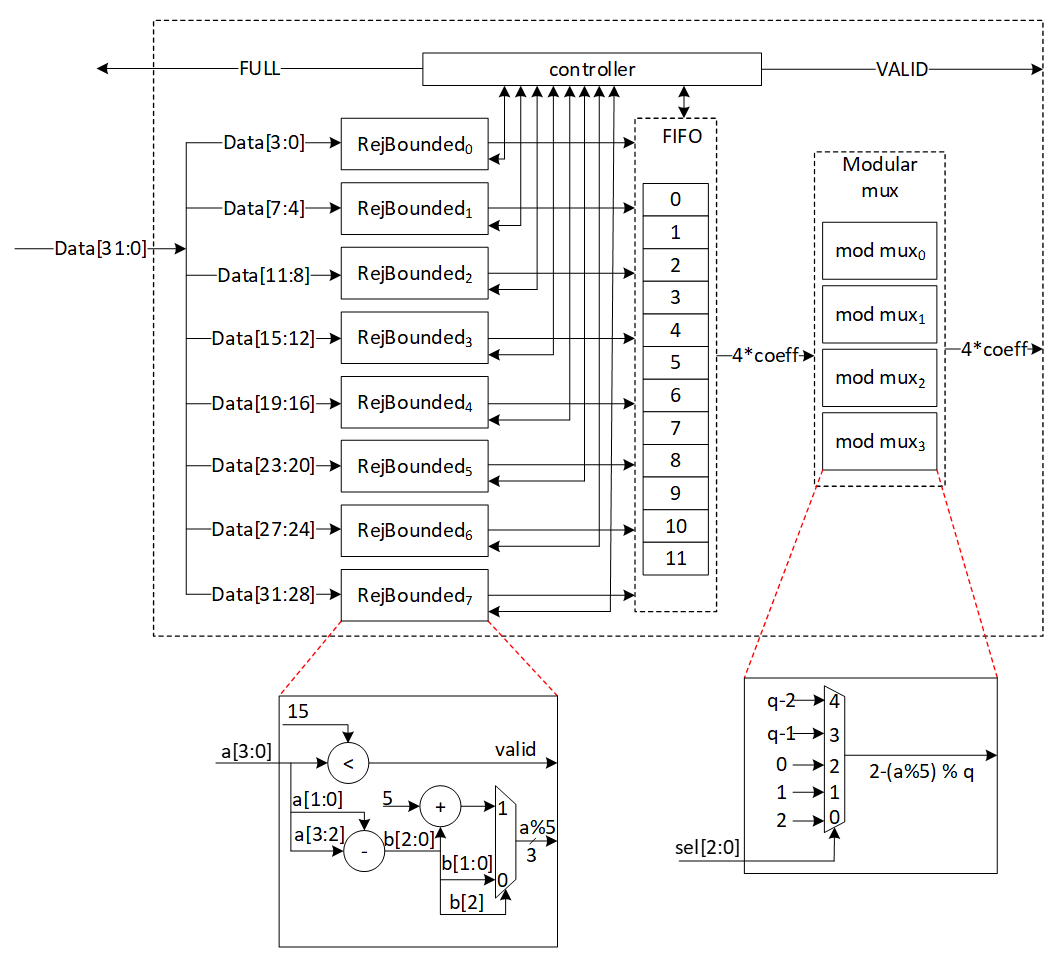

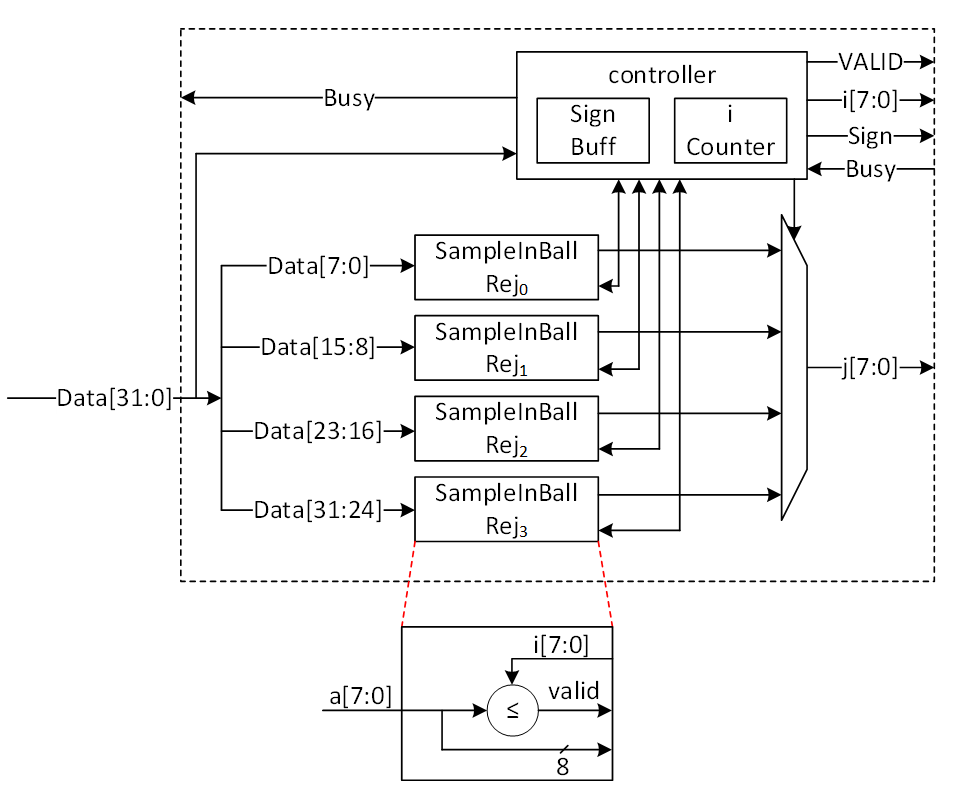

Rejection Sampler architecture

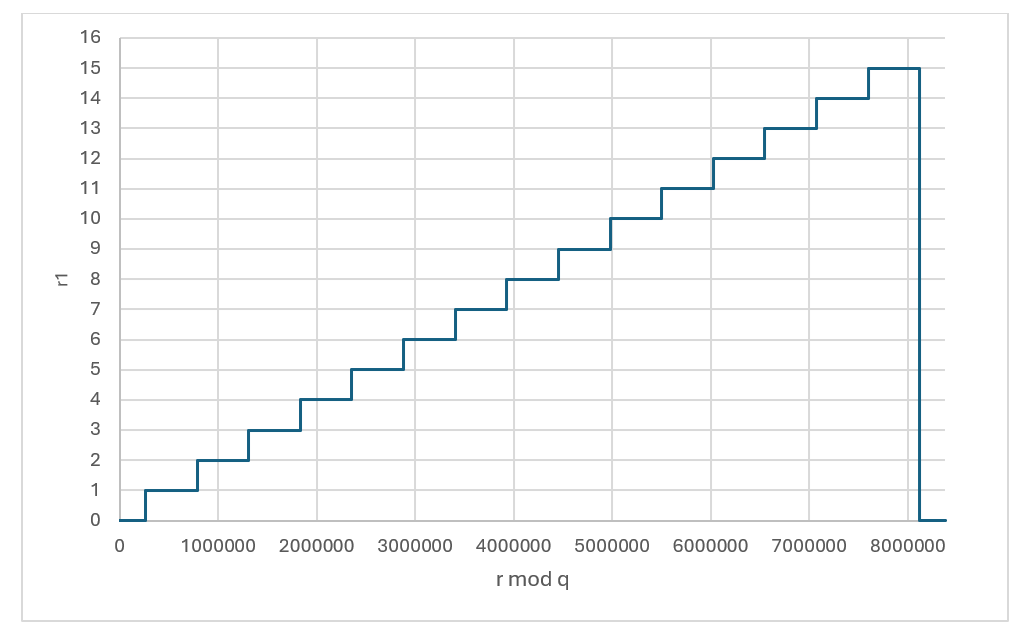

Dilithium (or Kyber) samples the polynomials that make up the vectors and matrices independently, using a fixed seed value and a nonce value that increases the security as the input for Keccak. Keccak is used to take these seed and nonce and generate random stream bits.

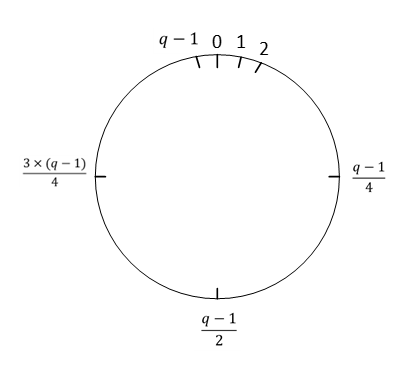

Rejection sampler takes 24-bits (12 bits in case of Kyber) and checks if it is less than the prime number q = 223 −213+1 = 8380417 (q=3369 in case of Kyber). It continues to sample all required coefficients, n=256, for a polynomial.

After sampling a polynomial with 256 coefficients, nonce will be changed and a new random stream will be generated using Keccak core and will be sampled by rejection sampling unit.

The output of this operation results in a matrix of polynomial with k rows and l column while each polynomial includes 256 coefficients.

\[ \begin{bmatrix} A_{0,0} & \cdots & A_{0,l-1} \ \vdots & \ddots & \vdots \ A_{k-1,0} & \cdots & A_{k-1,l-1} \end{bmatrix} _{k*l} \] Rejection sampling is used in all three operations of Dilithium, i.e., keygen, sign, and verify. Since based on the specification of the Dilithium (and Kyber), the sampled coefficients are considered in NTT domain, the output of rejection sampler can directly be used for polynomial multiplication operation, as follows:

\[ \begin{bmatrix} A_{0,0} & \cdots & A_{0,l-1} \ \vdots & \ddots & \vdots \ A_{k-1,0} & \cdots & A_{k-1,l-1} \end{bmatrix} \circ \begin{bmatrix} s_{1,0} \ \vdots \ s_{1,l-1} \end

\begin{bmatrix} A_{0,0} \circ s_{1,0} + \cdots + A_{0,l-1} \circ s_{1,l-1} \ \vdots \ A_{k-1,0} \circ s_{1,0} + \cdots + A_{k-1,l-1} \circ s_{1,l-1} \end{bmatrix} \]

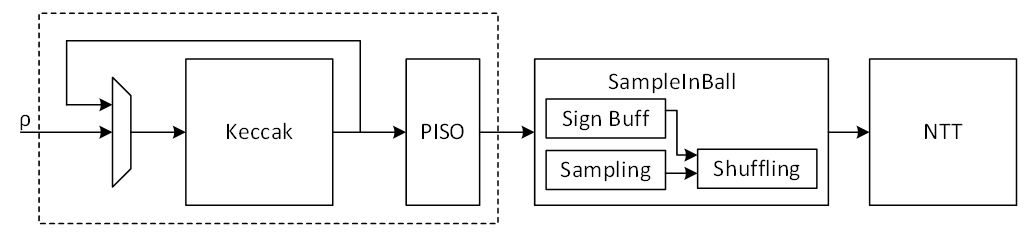

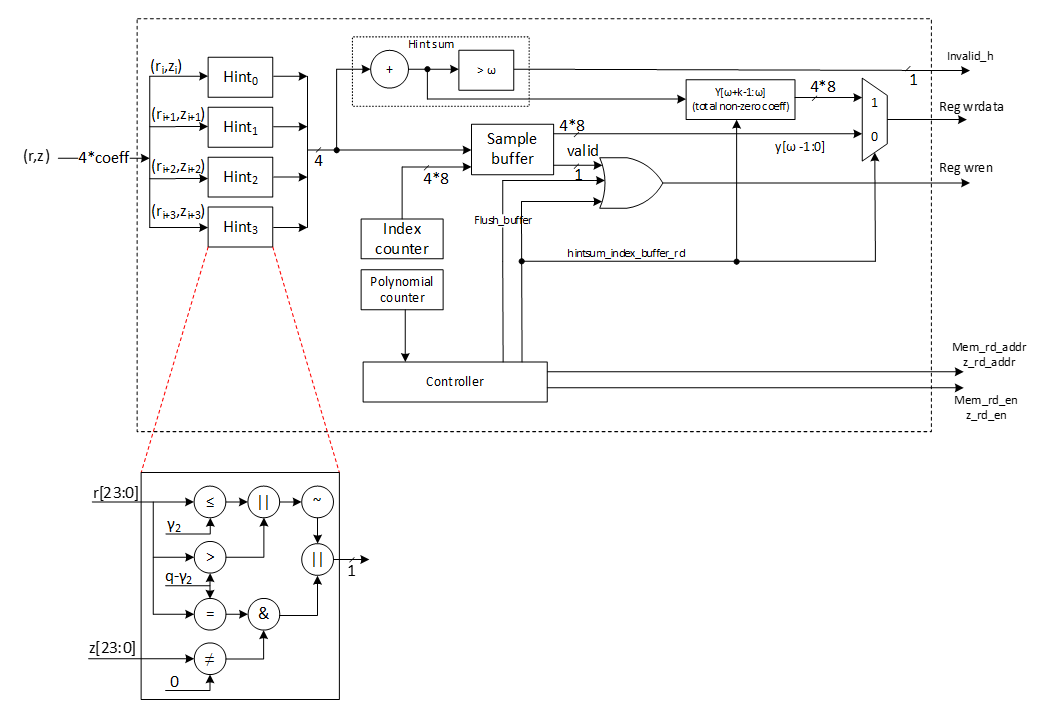

We propose an architecture to remove the cost of memory access from Keccak to rejection sampler, and from rejection sampler to polynomial multiplier. To achieve this, we need to have a balanced throughput between all these modules to avoid large buffering or conflict between them.

High-level architecture is illustrated as follows:

Keccak interface to Rejection Sampler

Keccak is used in SHAKE-128 configuration for rejection sampling operation. Hence, it will take the input data and generates 1344-bit output after each round. We propose implementing of Keccak while each round takes 12 cycles. The format of input data is as follows:

Input data = ρ | j | i

Where is seed with 256-bits, i and j are nonce that describes the row and column number of corresponding polynomial A such that:

Ai,j=Rejection_sampling(Keccak(ρ | j | i))

Since each 24-bit is used for one coefficient, each round of Keccak output provides 1344/24=56 coefficients. To have 256 coefficients for each polynomial (with same seed and nonce), we need to rerun Keccak for at least 5 rounds.

There are two paths for Keccak input. While the input can be set by controller for each new polynomial, the loop path is used to rerun Keccak for completing the previous polynomial.

Rejection sampler cannot take all 1344-bit output parallelly since it makes hardware architecture too costly and complex, and also there is no other input from Keccak for the next 12 cycles. Therefore, we propose a parallel-input serial-output (PISO) unit in between to store the Keccak output and feed rejection unit sequentially.

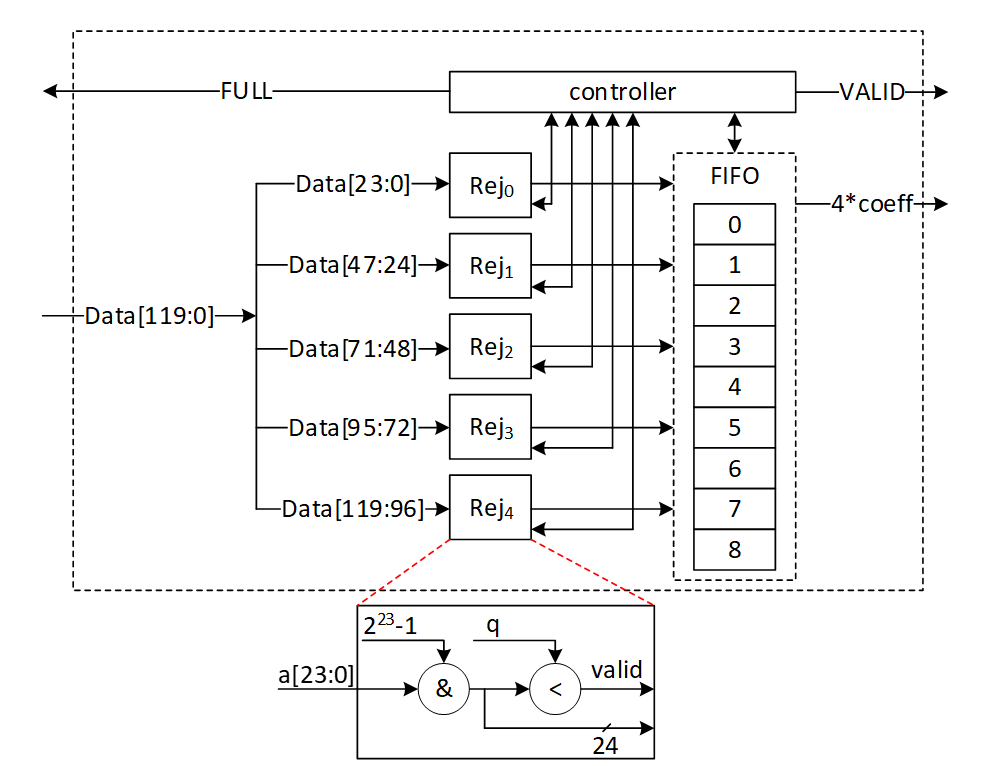

Rejection Sampler

This unit takes data from the output of SHAKE-128 stored in a PISO buffer. The required cycles for this unit are variable due to the non-deterministic pattern of rejection sampling. However, at least 5 Keccak rounds are required to provide 256 coefficients.

In our optimized architecture, this unit works in parallel with the Keccak core. Therefore, the latency for rejection sampling is absorbed within the latency for a concurrently running Keccak core.

Our proposed polynomial multiplier can perform point-wise multiplication on four coefficients per cycle that also helps to avoid memory access challenges and make the control logic too complicated. This implies that the optimal speed of the rejection sampling module is to sample four coefficients without rejection in one cycle.

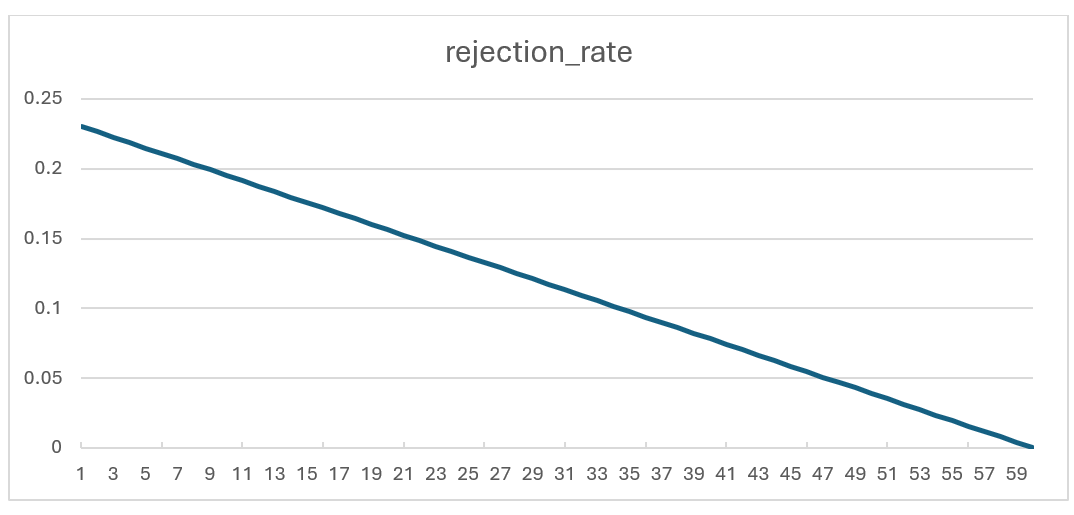

On the output side, as the rejection sampling might fail, the rejection rate for each input is:

\[rejection_rate= 1-q/2^23=1-8380471/2^23=0.0009764≈10^{-3}\]

Hence, the probability of failure to provide 4 appropriate coefficients from 4 inputs would be:

\[1-(1-rejection_rate)^4=0.00399\]

To reduce the failure probability and avoid any wait cycle in polynomial multiplication, 5 coefficients are fed into rejection while only 4 of them will be passed to polynomial multiplication. This decision reduces the probability of failure to

\[ 1 - (\text{probability of having 5 good inputs}) - (\text{probability of having 4 good inputs}) = 1 - (1 - \text{rejection_rate})^5 - \text{rejection_rate} \cdot \binom{5}{4} \cdot (1 - \text{rejection_rate})^4 = 0.00000998≈10^{-5} \]

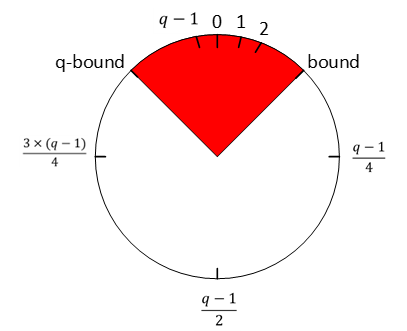

Adding a FIFO to rejection sampling unit can store the remaining unused coefficients and increase the probability of having 4 appropriate coefficients to match polynomial multiplication throughput. The architecture is as follows:

There are 5 rejection sampler circuits corresponding to each 24-bit input. The controller checks if each of these coefficients should be rejected or not. The valid input coefficients can be stored into the FIFO. While maximum 5 coefficients can be fed into FIFO, there are four more entries for the remaining coefficients from the previous cycle. There are several scenarios for the proposed balanced throughput architecture:

- At the very first cycle, or whenever the FIFO is empty, rejection sampling unit may not provide all 4 coefficients for polynomial multiplication unit. We reduce the failure probability of this scenario by feeding 5 coefficients, however, it may happen. So, for designing efficient architecture, instead of reducing the failure probability by adding more hardware cost, we use a VALID output that stops polynomial multiplier until all 4 required coefficients are sampled.

- If all 5 inputs are valid, they are going to be stored into FIFO. The first 4 coefficients will be sent to polynomial multiplication unit, while the remaining coefficients will be shifted to head of FIFO and be used for the next cycle with the first 3 valid coefficients from the next cycle.

- The maximum depth of FIFO is 9 entries. If all 9 FIFO entries are full, rejection sampling unit can provide the required output for the next cycles too. Hence, it does not accept a new input from Keccak core by raising FULL flag.

| Cycle count | Input from PISO | FIFO valid entries from previous cycle | Total valid samples | output | FIFO remaining for the next cycle |

|---|---|---|---|---|---|

| 0 | 5 | 0 | 5 | 4 | 1 |

| 1 | 5 | 1 | 6 | 4 | 2 |

| 2 | 5 | 2 | 7 | 4 | 3 |

| 3 | 5 | 3 | 8 | 4 | 4 |

| 4 | 5 | 4 | 9 | 4 | 5 (FULL) |

| 5 | 0 | 5 | 5 | 4 | 1 |

| 6 | 5 | 1 | 6 | 4 | 2 |

- If there is not FULL condition for reading from Keccak, all PISO data can be read in 12 cycles, including 11 cycles with 5 coefficients and 1 cycle for the 56th coefficient. This would be match with Keccak throughput that generates 56 coefficients per 12 cycles.

- The maximum number of FULL conditions is when there are no rejected coefficients for all 56 inputs. In this case, after 5 cycles with 5 coefficients, there is one FULL condition. After 12 cycles, 50 coefficients are processed by rejection sampling unit, and there are still 6 coefficients inside PISO. To maximize the utilization factor of our hardware resources, Keccak core will check the PISO status. If PISO contains 5 coefficients or more (the required inputs for rejection sampling unit), EMPTY flag will not be set, and Keccak will wait until the next cycle. Hence, rejection sampling unit takes 13 cycles to process 55 coefficients, and the last coefficients will be combined with the next Keccak round to be processed.

Performance of SampleRejq

For processing each round of Keccak using rejection sampling unit, we need 12 to 13 cycles that result in 60-65 cycles for each polynomial with 256 coefficients.

For a complete rejection sampling for Dilithium ML-DSA-87 with 8*7=56 polynomials, 3360 to 3640 cycles are required using sequential operation. However, our design can be duplicated to enable parallel sampling for two different polynomials. Having two parallel design results in 1680 to 1820 cycles, while three parallel design results in 1120 to 1214 cycles at the cost of more resource utilization.

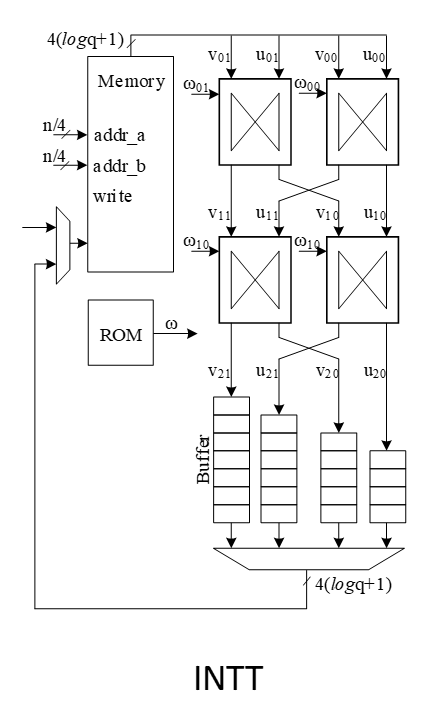

INTT architecture

A merged-layer INTT technique uses two pipelined stages with two parallel cores in each stage level, making 4 butterfly cores in total. The parallel pipelined butterfly cores enable us to perform Radix-4 INTT operation with 4 parallel coefficients.

However, INTT requires a specific memory pattern that may limit the efficiency of the butterfly operation. For Dilithium use case, there are n=256 coefficients per polynomial that requires log n=8 layers of INTT operations. Each butterfly unit takes two coefficients while difference between the indexes is 2i-1 in ith stage. That means for the first stage, the given indexes for each butterfly unit are (2*k, 2*k+1):

Stage 1 input indexes: {(0, 1), {2, 3), (4, 5), …, (254, 255)}

Stage 2 input indexes: {(0, 2), {1, 3), (4, 6), …, (61, 63), (64, 66), (65, 67), …, (253, 255)}

…

Stage 8 input indexes: {(0, 128), {1, 129), (2, 130), …, (127, 255)}

There are several considerations for that:

- We need access to 4 coefficients per cycle to match the throughput into 2×2 butterfly units.

- An optimized architecture provides a memory with only one reading port, and one writing port.

- Based on the previous two notes, each memory address contains 4 coefficients.

- The initial coefficients are stored sequentially by NTT. Specifically, they begin with 0 and continue incrementally up to 255. Hence, at the very beginning cycle, the memory contains (0, 1, 2, 3) in the first address, (4, 5, 6, 7) in second address, and so on.

- The cost of in-place memory relocation to align the memory content is not negligible. Particularly, it needs to be repeated for each stage.

While memory bandwidth limits the efficiency of the butterfly operation, we use a specific memory pattern to store four coefficients per address.

We propose a pipeline architecture the read memory in a particular pattern and using a set of buffers, the corresponding coefficients are fed into INTT block.

The initial memory contains the indexes as follows:

| Address | Initial Memory Content | |||

|---|---|---|---|---|

| 0 | 0 | 1 | 2 | 3 |

| 1 | 4 | 5 | 6 | 7 |

| 2 | 8 | 9 | 10 | 11 |

| 3 | 12 | 13 | 14 | 15 |

| 4 | 16 | 17 | 18 | 19 |

| 5 | 20 | 21 | 22 | 23 |

| 6 | 24 | 25 | 26 | 27 |

| 7 | 28 | 29 | 30 | 31 |

| 8 | 32 | 33 | 34 | 35 |

| 9 | 36 | 37 | 38 | 39 |

| 10 | 40 | 41 | 42 | 43 |

| 11 | 44 | 45 | 46 | 47 |

| 12 | 48 | 49 | 50 | 51 |

| 13 | 52 | 53 | 54 | 55 |

| 14 | 56 | 57 | 58 | 59 |

| 15 | 60 | 61 | 62 | 63 |

| 16 | 64 | 65 | 66 | 67 |

| 17 | 68 | 69 | 70 | 71 |

| 18 | 72 | 73 | 74 | 75 |

| 19 | 76 | 77 | 78 | 79 |

| 20 | 80 | 81 | 82 | 83 |

| 21 | 84 | 85 | 86 | 87 |

| 22 | 88 | 89 | 90 | 91 |

| 23 | 92 | 93 | 94 | 95 |

| 24 | 96 | 97 | 98 | 99 |

| 25 | 100 | 101 | 102 | 103 |

| 26 | 104 | 105 | 106 | 107 |

| 27 | 108 | 109 | 110 | 111 |

| 28 | 112 | 113 | 114 | 115 |

| 29 | 116 | 117 | 118 | 119 |

| 30 | 120 | 121 | 122 | 123 |

| 31 | 124 | 125 | 126 | 127 |

| 32 | 128 | 129 | 130 | 131 |

| 33 | 132 | 133 | 134 | 135 |

| 34 | 136 | 137 | 138 | 139 |

| 35 | 140 | 141 | 142 | 143 |

| 36 | 144 | 145 | 146 | 147 |

| 37 | 148 | 149 | 150 | 151 |

| 38 | 152 | 153 | 154 | 155 |

| 39 | 156 | 157 | 158 | 159 |

| 40 | 160 | 161 | 162 | 163 |

| 41 | 164 | 165 | 166 | 167 |

| 42 | 168 | 169 | 170 | 171 |

| 43 | 172 | 173 | 174 | 175 |

| 44 | 176 | 177 | 178 | 179 |

| 45 | 180 | 181 | 182 | 183 |

| 46 | 184 | 185 | 186 | 187 |

| 47 | 188 | 189 | 190 | 191 |

| 48 | 192 | 193 | 194 | 195 |

| 49 | 196 | 197 | 198 | 199 |

| 50 | 200 | 201 | 202 | 203 |

| 51 | 204 | 205 | 206 | 207 |

| 52 | 208 | 209 | 210 | 211 |

| 53 | 212 | 213 | 214 | 215 |

| 54 | 216 | 217 | 218 | 219 |

| 55 | 220 | 221 | 222 | 223 |

| 56 | 224 | 225 | 226 | 227 |

| 57 | 228 | 229 | 230 | 231 |

| 58 | 232 | 233 | 234 | 235 |

| 59 | 236 | 237 | 238 | 239 |

| 60 | 240 | 241 | 242 | 243 |

| 61 | 244 | 245 | 246 | 247 |

| 62 | 248 | 249 | 250 | 251 |

| 63 | 252 | 253 | 254 | 255 |

Suppose memory is read in the regular pattern:

Reading Addr: 0, 1, 2, 3, 4, …, 62, 63

The input goes to the butterfly architecture. The input values contain the required coefficients for our butterfly units in the next stage, i.e., (0, 1) and (2, 3). Since we merged the first and second layers of INTT stages, the output of the first parallel butterfly units need to exchange one coefficient and then the required input for the second parallel set of butterfly units is ready, i.e., (0, 2) and (1, 3).

To prepare the results for the next stages, the output needs to be stored into the customized buffer architecture as follows:

| 0 | → | |||||||

|---|---|---|---|---|---|---|---|---|

| 1 | ||||||||

| 2 | ||||||||

| 3 |

Cycle 0 after butterfly reading address 0

| 4 | → | 0 | ||||||

|---|---|---|---|---|---|---|---|---|

| 5 | 1 | |||||||

| 6 | 2 | |||||||

| 7 | 3 |

Cycle 0 after butterfly reading address 1

| 8 | → | 4 | 0 | |||||

|---|---|---|---|---|---|---|---|---|

| 9 | 5 | 1 | ||||||

| 10 | 6 | 2 | ||||||

| 11 | 7 | 3 |

Cycle 2 after butterfly reading address 2

| 12 | → | 8 | 4 | 0 | ||||

|---|---|---|---|---|---|---|---|---|

| 13 | 9 | 5 | 1 | |||||

| 14 | 10 | 6 | 2 | |||||

| 15 | 11 | 7 | 3 |

Cycle 3 after butterfly reading address 3

| 16 | → | 12 | 8 | 4 | 0 | |||

|---|---|---|---|---|---|---|---|---|

| 17 | 13 | 9 | 5 | 1 | ||||

| 18 | 14 | 10 | 6 | 2 | ||||

| 19 | 15 | 11 | 7 | 3 |

Cycle 4 after butterfly reading address 4

| 20 | → | 16 | 12 | 8 | 4 | |||

|---|---|---|---|---|---|---|---|---|

| 21 | 17 | 13 | 9 | 5 | 1 | |||

| 22 | 18 | 14 | 10 | 6 | 2 | |||

| 23 | 19 | 15 | 11 | 7 | 3 |

Cycle 5 after butterfly reading address 5

The highlighted value in the first buffer contains the required coefficients for our butterfly units in the next stage, i.e., (0, 4) and (8, 12).

However, we need to write the output in a particular pattern as follows:

Writing Addr: 0, 16, 32, 48, 1, 17, 33, 49, …, 15, 31, 47, 63

After completing the first round of operation including INTT stage 1 and 2, the memory contains the following data:

| Address | Memory Content after 1&2 stages | |||

|---|---|---|---|---|

| 0 | 0 | 4 | 8 | 12 |

| 1 | 16 | 20 | 24 | 28 |

| 2 | 32 | 36 | 40 | 44 |

| 3 | 48 | 52 | 56 | 60 |

| 4 | 64 | 68 | 72 | 76 |

| 5 | 80 | 84 | 88 | 92 |

| 6 | 96 | 100 | 104 | 108 |

| 7 | 112 | 116 | 120 | 124 |

| 8 | 128 | 132 | 136 | 140 |

| 9 | 144 | 148 | 152 | 156 |

| 10 | 160 | 164 | 168 | 172 |

| 11 | 176 | 180 | 184 | 188 |

| 12 | 192 | 196 | 200 | 204 |

| 13 | 208 | 212 | 216 | 220 |

| 14 | 224 | 228 | 232 | 236 |

| 15 | 240 | 244 | 248 | 252 |

| 16 | 1 | 5 | 9 | 13 |

| 17 | 17 | 21 | 25 | 29 |

| 18 | 33 | 37 | 41 | 45 |

| 19 | 49 | 53 | 57 | 61 |

| 20 | 65 | 69 | 73 | 77 |

| 21 | 81 | 85 | 89 | 93 |

| 22 | 97 | 101 | 105 | 109 |

| 23 | 113 | 117 | 121 | 125 |

| 24 | 129 | 133 | 137 | 141 |

| 25 | 145 | 149 | 153 | 157 |

| 26 | 161 | 165 | 169 | 173 |

| 27 | 177 | 181 | 185 | 189 |

| 28 | 193 | 197 | 201 | 205 |

| 29 | 209 | 213 | 217 | 221 |

| 30 | 225 | 229 | 233 | 237 |

| 31 | 241 | 245 | 249 | 253 |

| 32 | 2 | 6 | 10 | 14 |

| 33 | 18 | 22 | 26 | 30 |

| 34 | 34 | 38 | 42 | 46 |

| 35 | 50 | 54 | 58 | 62 |

| 36 | 66 | 70 | 74 | 78 |

| 37 | 82 | 86 | 90 | 94 |

| 38 | 98 | 102 | 106 | 110 |

| 39 | 114 | 118 | 122 | 126 |

| 40 | 130 | 134 | 138 | 142 |

| 41 | 146 | 150 | 154 | 158 |

| 42 | 162 | 166 | 170 | 174 |

| 43 | 178 | 182 | 186 | 190 |

| 44 | 194 | 198 | 202 | 206 |

| 45 | 210 | 214 | 218 | 222 |

| 46 | 226 | 230 | 234 | 238 |

| 47 | 242 | 246 | 250 | 254 |

| 48 | 3 | 7 | 11 | 15 |

| 49 | 19 | 23 | 27 | 31 |

| 50 | 35 | 39 | 43 | 47 |

| 51 | 51 | 55 | 59 | 63 |

| 52 | 67 | 71 | 75 | 79 |

| 53 | 83 | 87 | 91 | 95 |

| 54 | 99 | 103 | 107 | 111 |

| 55 | 115 | 119 | 123 | 127 |

| 56 | 131 | 135 | 139 | 143 |

| 57 | 147 | 151 | 155 | 159 |

| 58 | 163 | 167 | 171 | 175 |

| 59 | 179 | 183 | 187 | 191 |

| 60 | 195 | 199 | 203 | 207 |

| 61 | 211 | 215 | 219 | 223 |

| 62 | 227 | 231 | 235 | 239 |

| 63 | 243 | 247 | 251 | 255 |

The same process can be applied in the next round to perform INTT stage 3 and 4.

| 0 | → | |||||||

|---|---|---|---|---|---|---|---|---|

| 4 | ||||||||

| 8 | ||||||||

| 12 |

Cycle 0 after butterfly reading address 0

| 16 | → | 0 | ||||||

|---|---|---|---|---|---|---|---|---|

| 20 | 4 | |||||||

| 24 | 8 | |||||||

| 28 | 12 |

Cycle 1 after butterfly reading address 1

| 32 | → | 16 | 0 | |||||

|---|---|---|---|---|---|---|---|---|

| 36 | 20 | 4 | ||||||

| 40 | 24 | 8 | ||||||

| 44 | 28 | 12 |

Cycle 2 after butterfly reading address 2

| 48 | → | 32 | 16 | 0 | ||||

|---|---|---|---|---|---|---|---|---|

| 52 | 36 | 20 | 4 | |||||

| 56 | 40 | 24 | 8 | |||||

| 60 | 44 | 28 | 12 |

Cycle 3 after butterfly reading address 3

| 64 | → | 48 | 32 | 16 | 0 | |||

|---|---|---|---|---|---|---|---|---|

| 68 | 52 | 36 | 20 | 4 | ||||

| 72 | 56 | 40 | 24 | 8 | ||||

| 76 | 60 | 44 | 28 | 12 |

Cycle 4 after butterfly reading address 4

| 80 | → | 64 | 48 | 32 | 16 | |||

|---|---|---|---|---|---|---|---|---|

| 84 | 68 | 52 | 36 | 20 | 4 | |||

| 88 | 72 | 56 | 40 | 24 | 8 | |||

| 92 | 76 | 60 | 44 | 28 | 12 |

Cycle 5 after butterfly reading address 5

After completing all stages, the memory contents would be as follows:

| Address | Memory Content after Stage 7&8 | |||

|---|---|---|---|---|

| 0 | 0 | 1 | 2 | 3 |

| 1 | 4 | 5 | 6 | 7 |

| 2 | 8 | 9 | 10 | 11 |

| 3 | 12 | 13 | 14 | 15 |

| 4 | 16 | 17 | 18 | 19 |

| 5 | 20 | 21 | 22 | 23 |

| 6 | 24 | 25 | 26 | 27 |

| 7 | 28 | 29 | 30 | 31 |

| 8 | 32 | 33 | 34 | 35 |

| 9 | 36 | 37 | 38 | 39 |

| 10 | 40 | 41 | 42 | 43 |

| 11 | 44 | 45 | 46 | 47 |

| 12 | 48 | 49 | 50 | 51 |

| 13 | 52 | 53 | 54 | 55 |

| 14 | 56 | 57 | 58 | 59 |

| 15 | 60 | 61 | 62 | 63 |

| 16 | 64 | 65 | 66 | 67 |

| 17 | 68 | 69 | 70 | 71 |

| 18 | 72 | 73 | 74 | 75 |

| 19 | 76 | 77 | 78 | 79 |

| 20 | 80 | 81 | 82 | 83 |

| 21 | 84 | 85 | 86 | 87 |

| 22 | 88 | 89 | 90 | 91 |

| 23 | 92 | 93 | 94 | 95 |

| 24 | 96 | 97 | 98 | 99 |

| 25 | 100 | 101 | 102 | 103 |

| 26 | 104 | 105 | 106 | 107 |

| 27 | 108 | 109 | 110 | 111 |

| 28 | 112 | 113 | 114 | 115 |

| 29 | 116 | 117 | 118 | 119 |

| 30 | 120 | 121 | 122 | 123 |

| 31 | 124 | 125 | 126 | 127 |

| 32 | 128 | 129 | 130 | 131 |

| 33 | 132 | 133 | 134 | 135 |

| 34 | 136 | 137 | 138 | 139 |

| 35 | 140 | 141 | 142 | 143 |

| 36 | 144 | 145 | 146 | 147 |

| 37 | 148 | 149 | 150 | 151 |

| 38 | 152 | 153 | 154 | 155 |

| 39 | 156 | 157 | 158 | 159 |

| 40 | 160 | 161 | 162 | 163 |

| 41 | 164 | 165 | 166 | 167 |

| 42 | 168 | 169 | 170 | 171 |

| 43 | 172 | 173 | 174 | 175 |

| 44 | 176 | 177 | 178 | 179 |

| 45 | 180 | 181 | 182 | 183 |

| 46 | 184 | 185 | 186 | 187 |

| 47 | 188 | 189 | 190 | 191 |

| 48 | 192 | 193 | 194 | 195 |

| 49 | 196 | 197 | 198 | 199 |

| 50 | 200 | 201 | 202 | 203 |

| 51 | 204 | 205 | 206 | 207 |

| 52 | 208 | 209 | 210 | 211 |

| 53 | 212 | 213 | 214 | 215 |

| 54 | 216 | 217 | 218 | 219 |

| 55 | 220 | 221 | 222 | 223 |

| 56 | 224 | 225 | 226 | 227 |

| 57 | 228 | 229 | 230 | 231 |

| 58 | 232 | 233 | 234 | 235 |

| 59 | 236 | 237 | 238 | 239 |

| 60 | 240 | 241 | 242 | 243 |

| 61 | 244 | 245 | 246 | 247 |

| 62 | 248 | 249 | 250 | 251 |

| 63 | 252 | 253 | 254 | 255 |

The proposed method saves the time needed for shuffling and reordering, while using only a little more memory.

INTT shuffling countermeasure

Similar to NTT operation, INTT requires shuffling the order of execution of coefficients in order to mitigate SCA attacks. Refer to section 5.3.3 for details on NTT shuffling. In INTT mode, the chunks are split in the following pattern into 16 chunks:

| Address | 1&2 | ||||

|---|---|---|---|---|---|

| 0 | 0 | 1 | 2 | 3 | |

| 1 | 4 | 5 | 6 | 7 | |

| 2 | 8 | 9 | 10 | 11 | |

| 3 | 12 | 13 | 14 | 15 | |

| 4 | 16 | 17 | 18 | 19 | |

| 5 | 20 | 21 | 22 | 23 | |

| 6 | 24 | 25 | 26 | 27 | |

| 7 | 28 | 29 | 30 | 31 | |

| 8 | 32 | 33 | 34 | 35 | |

| 9 | 36 | 37 | 38 | 39 | |

| 10 | 40 | 41 | 42 | 43 | |

| 11 | 44 | 45 | 46 | 47 | |

| 12 | 48 | 49 | 50 | 51 | |

| 13 | 52 | 53 | 54 | 55 | |

| 14 | 56 | 57 | 58 | 59 | |

| 15 | 60 | 61 | 62 | 63 | |

| 16 | 64 | 65 | 66 | 67 | |

| 17 | 68 | 69 | 70 | 71 | |

| 18 | 72 | 73 | 74 | 75 | |

| 19 | 76 | 77 | 78 | 79 | |

| 20 | 80 | 81 | 82 | 83 | |

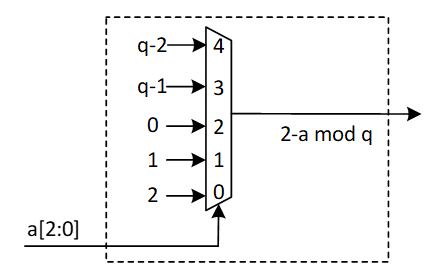

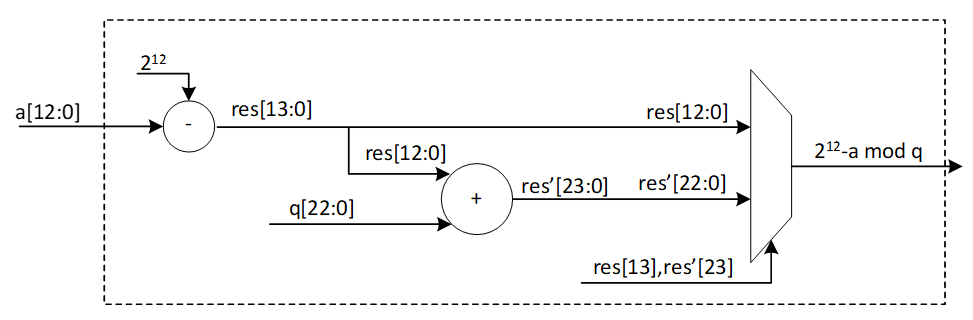

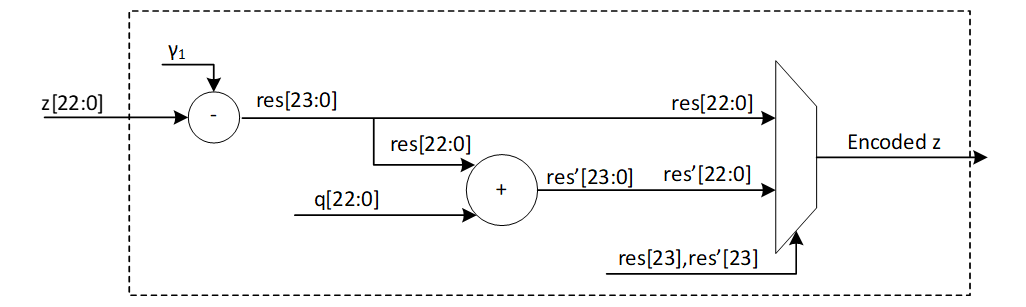

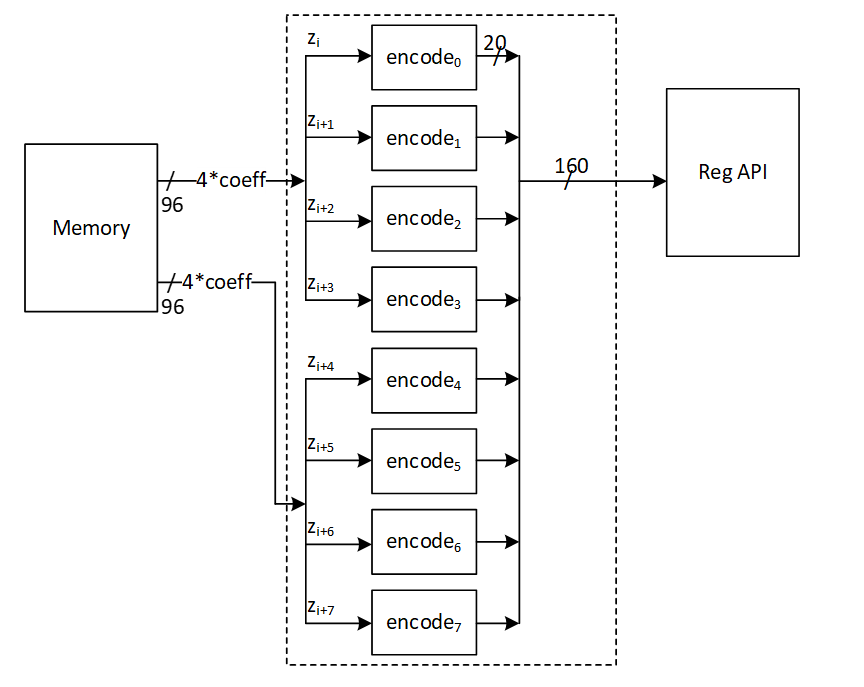

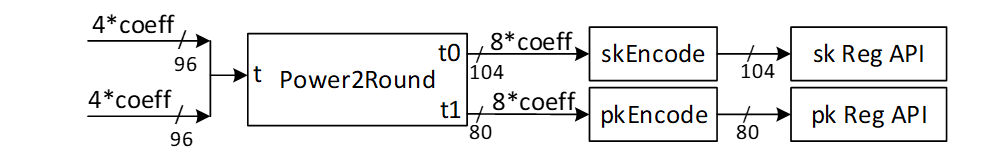

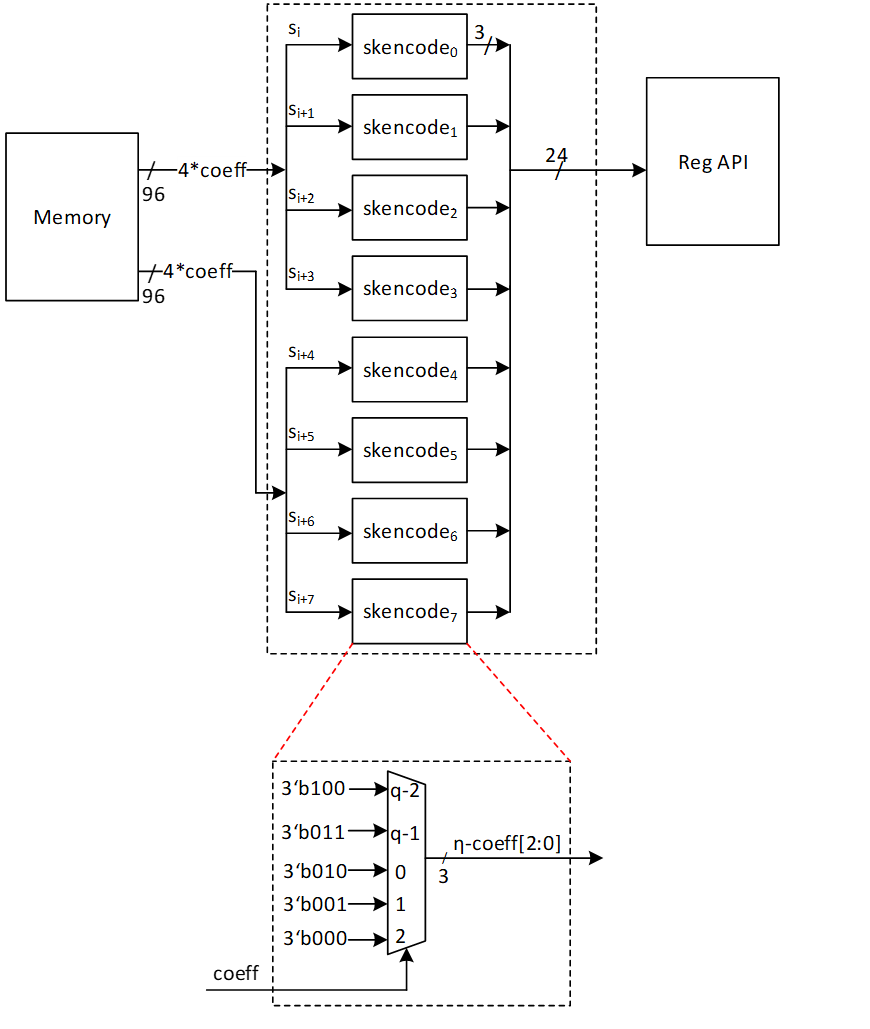

| 21 | 84 | 85 | 86 | 87 | |